How to Implement IT Process Automation for Enterprise Teams in 2026

A single purchase order can pass through SAP, Salesforce, and three spreadsheets before it reaches an approver. An IT service ticket can bounce between teams for days. A team member in procurement can spend an entire week on repetitive three-way matching, the manual comparison of a purchase order, invoice, and receipt. These inefficiencies span platforms, approval chains, and manual handoffs. That's why your C-suite is asking when AI-driven automation will start delivering measurable results.

The pressure is real. Hyperautomation is a staple discipline for 90% of large enterprises, and most large organizations increased automation spend in 2025. Yet fewer than one in eight have agentic solutions in production, and 57% of infrastructure and operations (I&O) leaders have experienced at least one AI initiative failure. That gap between investment and production outcomes explains why many programs stall.

Why Do IT Process Automation Programs Stall Before Production?

For many enterprises, the pilot-to-production gap reflects structural program issues more than timing alone. Programs often stall when automation is treated as a technology deployment instead of an operating model change.

Four root causes recur across the research:

- No production pathway: Organizations lack a defined, documented route from proof of concept to production. Without one, pilots are unlikely to scale.

- Automating broken processes: Many teams attempt to automate current-state workflows rather than redesigning them for an automated environment. Automating a broken process accelerates the error rate without resolving the underlying cause. Processes often need redesign before agentic deployment.

- Missing foundational readiness: Many organizations at early maturity stages attempt workflow automation without the data hygiene, governance, and process alignment to support it.

- Governance that can't keep pace: Most AI failures trace back to people and process design, not technology. Governance failures can create broader consequences than technical issues alone.

These patterns point to the same implementation problem: enterprises are trying to scale automation before their operating models are ready.

More than 40% of agentic AI projects are predicted to be canceled by 2027, driven by escalating costs, unclear business value, and inadequate risk controls. Future-built companies deploy far more AI workflows than laggards, and the difference comes down to prioritization discipline and roadmap clarity rather than technology advantage.

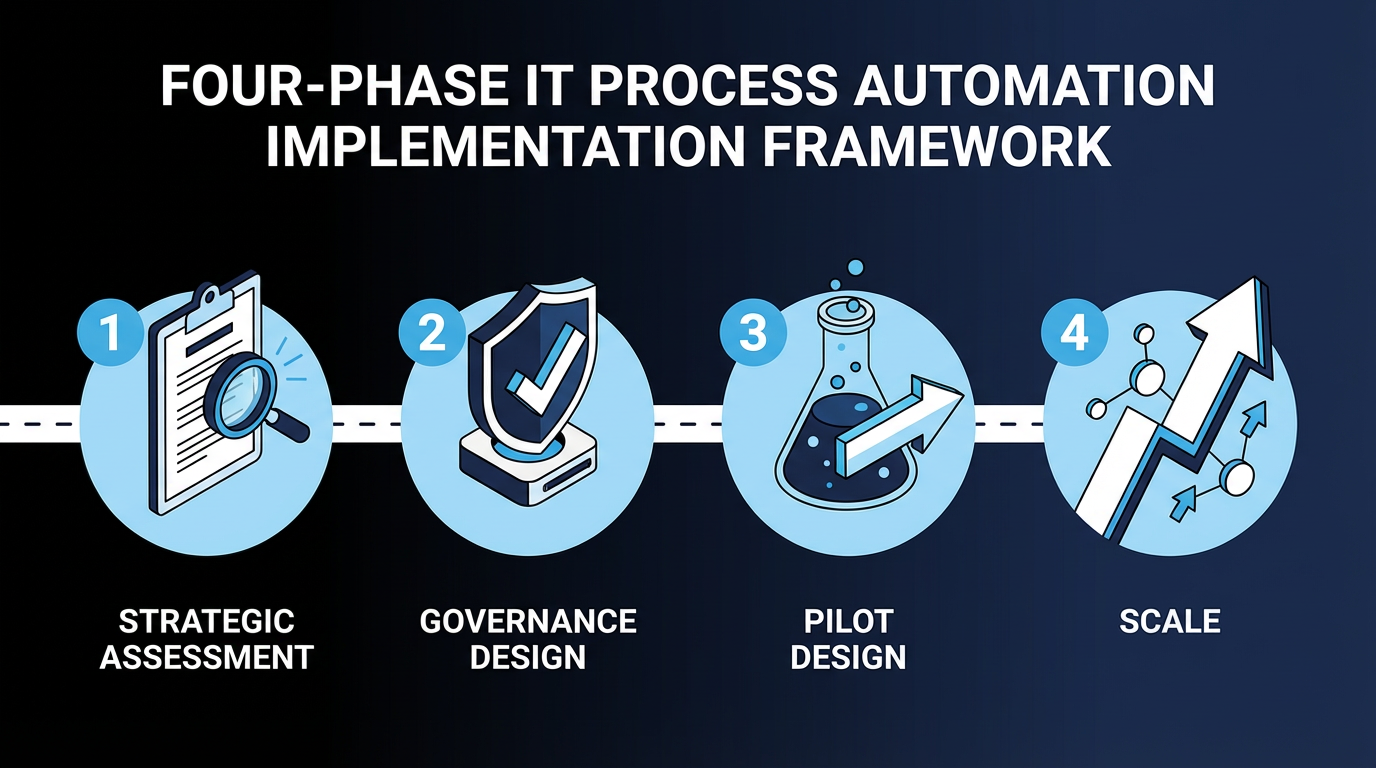

A Four-Phase Implementation Framework for IT Process Automation

Phase 1: Strategic Assessment and Process Selection

Start with an honest self-assessment of maturity before selecting which processes to automate. Without it, teams choose workflows that look attractive in demos but fail in production because data, controls, or process design are not ready.

Not every process is a good candidate for automation. Classify your workflows using a hierarchy of autonomy:

- Full autonomy: Complete automation, including L1 ticket deflection (requests resolved before they reach a human support queue), password resets, and straight-through invoice processing (invoices that move through the process without manual review). Highest return on investment (ROI) certainty, lowest governance complexity.

- Supervised autonomy: Automated with human review checkpoints, including invoice exception management, incident escalation, and vendor onboarding. Requires governance design before deployment.

- Human-only: Must remain human-controlled, including high-stakes regulatory decisions, complex negotiations, and novel exception handling.

This classification allows teams to assign different control models to different workflows. It also makes it easier to choose where rules, AI agents, and human review each belong.
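The three tiers above can be captured as a small lookup that the rest of a program reads from. The following is a minimal Python sketch, not a prescribed method: the tier names mirror the list above, while the `WORKFLOW_TIERS` mapping, the workflow identifiers, and both helper functions are hypothetical names introduced for illustration. Defaulting unclassified workflows to human-only is an assumption chosen to be conservative.

```python
from enum import Enum


class Autonomy(Enum):
    FULL = "full autonomy"
    SUPERVISED = "supervised autonomy"
    HUMAN_ONLY = "human-only"


# Hypothetical workflow identifiers, mapped to the tiers from the list above.
WORKFLOW_TIERS = {
    "l1_ticket_deflection": Autonomy.FULL,
    "password_reset": Autonomy.FULL,
    "straight_through_invoice": Autonomy.FULL,
    "invoice_exception": Autonomy.SUPERVISED,
    "incident_escalation": Autonomy.SUPERVISED,
    "vendor_onboarding": Autonomy.SUPERVISED,
    "regulatory_decision": Autonomy.HUMAN_ONLY,
    "complex_negotiation": Autonomy.HUMAN_ONLY,
}


def control_model(workflow: str) -> Autonomy:
    """Return the tier for a workflow; anything unclassified stays human-only."""
    return WORKFLOW_TIERS.get(workflow, Autonomy.HUMAN_ONLY)


def needs_human_checkpoint(workflow: str) -> bool:
    """True when a person must review or own the decision."""
    return control_model(workflow) is not Autonomy.FULL
```

Keeping the classification in one place means a workflow's tier can be changed deliberately as controls mature, without that change being scattered across individual workflow definitions.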

Many teams begin with finance or IT as a starting point and expand from there. Foundational readiness, including data hygiene, governance structures, and process alignment, is a prerequisite. Address it before automation begins.

Phase 2: Governance Design Before Deployment

Governance should be designed before pilot deployment. Teams that skip this step discover approval, audit, and control gaps only after a pilot touches production data. Traditional IT governance models can be extended for AI agents that make independent decisions, though governance may need significant revision as decision-making autonomy increases.

Three non-negotiable governance requirements apply before any agent touches production data:

- Define where humans remain in control. Which decisions require human approval? At what confidence thresholds (the score or boundary at which the workflow routes work to a person instead of acting on its own)? Which records of agent behavior must be retained?

- Address shadow AI explicitly. Unsanctioned AI deployments create governance blind spots where autonomous agents access sensitive data and interact with other enterprise platforms. Governance design must extend shadow IT monitoring policies to cover AI deployments.

- Embed senior leadership ownership. Enterprises in which senior leadership actively shapes AI governance achieve greater business value than those that delegate it to technical teams.

These requirements determine who can deploy automation, what controls apply, and how exceptions are handled. As scale increases, many organizations move from shared committee review toward clearer role-specific accountability, because a committee-as-gatekeeper model becomes a bottleneck when every deployment waits on the same approval path.
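The first requirement (human control points, confidence thresholds, and retained records) can be written down as an explicit policy object. This is a sketch under stated assumptions: `GovernancePolicy`, the 0.90 threshold, and the decision-type names are all hypothetical, and a real program would set these values through its own governance process.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass
class GovernancePolicy:
    # Decision types that always require human approval (illustrative values).
    always_approve: Set[str]
    # Below this confidence score, work is routed to a person (assumed value).
    confidence_threshold: float = 0.90
    # Retained record of every routing decision.
    audit_log: List[Dict] = field(default_factory=list)

    def route(self, decision_type: str, confidence: float) -> str:
        """Return 'human' or 'agent' and record the routing decision."""
        if decision_type in self.always_approve:
            target = "human"
        elif confidence < self.confidence_threshold:
            target = "human"
        else:
            target = "agent"
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "decision_type": decision_type,
            "confidence": confidence,
            "routed_to": target,
        })
        return target
```

For example, with `GovernancePolicy(always_approve={"vendor_payment_release"})`, a payment release goes to a person regardless of the agent's confidence, and every call leaves an entry in `audit_log`.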

Phase 3: Pilot Design with a Production Pathway

A pilot should use real data, real users, and real processes in a production environment. A production-first pilot exposes integration, governance, and change-management issues early, when they can still be corrected without reworking the whole program.

Two findings from primary research should shape your pilot design.

First, pilots built through strategic partnerships are more likely to reach full deployment than those built internally, with employee usage rates nearly double for externally built tools.

Second, the defined steps from proof of concept to production, the "route to live," must be documented during design, not after pilot results are known. Organizations without a clear documented route to live are much less likely to complete the pilot-to-scale transition.

Both findings point to the same practical rule: a pilot tests both the workflow and the path to production.
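One way to make a route to live concrete at design time is to list its gates, each with an owner, and check them mechanically. The sketch below is illustrative only: the `Gate` type, the gate names, and the owners are hypothetical and would differ in any real program.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Gate:
    name: str
    owner: str
    passed: bool = False


def ready_for_production(gates: List[Gate]) -> Tuple[bool, List[str]]:
    """Return overall readiness plus the names of gates still open."""
    open_gates = [g.name for g in gates if not g.passed]
    return (len(open_gates) == 0, open_gates)


# Hypothetical route to live, written down before the pilot starts.
route_to_live = [
    Gate("integration tested against production systems", "IT", passed=True),
    Gate("governance controls approved", "Risk", passed=True),
    Gate("user training completed", "Operations", passed=False),
]
```

Calling `ready_for_production(route_to_live)` on this example reports that the pilot is not ready and names the one open gate, which gives the pilot review a specific item to close rather than a general impression.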

Phase 4: Scale with Continuous Governance

Scaling requires more than deployment volume. Production programs require continuous monitoring for model drift, the gradual decline in output quality as conditions change, and for policy violations. Governance controls must be embedded in enterprise risk management. Ongoing program management handles control attestation, documented evidence that required controls are operating as intended, and regulatory readiness.
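Drift monitoring can start with something as simple as comparing recent output quality against a baseline. The sketch below assumes that each agent output can be judged correct or incorrect after the fact (for example, through sampled human review); `DriftMonitor`, the window size, and the tolerance are hypothetical choices, not a standard.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Flags drift when recent accuracy falls below baseline minus tolerance."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline
        self.tolerance = tolerance
        # Only the most recent `window` outcomes are kept; True means correct.
        self.outcomes = deque(maxlen=window)

    def record(self, correct: bool) -> None:
        self.outcomes.append(correct)

    def recent_accuracy(self) -> Optional[float]:
        """Share of correct outcomes in the window, or None with no data."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifting(self) -> bool:
        accuracy = self.recent_accuracy()
        return accuracy is not None and accuracy < self.baseline - self.tolerance
```

A monitor like this only detects a decline; deciding what happens next (pausing the agent, lowering its autonomy tier, or retraining) remains a governance decision.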

Scaling also requires organizational change: different processes, different roles, different decision rights. Many operations leaders identify adapting workers to new automation environments as a top concern. If the operating model does not change with the workflow, deployment volume alone will not produce durable adoption.

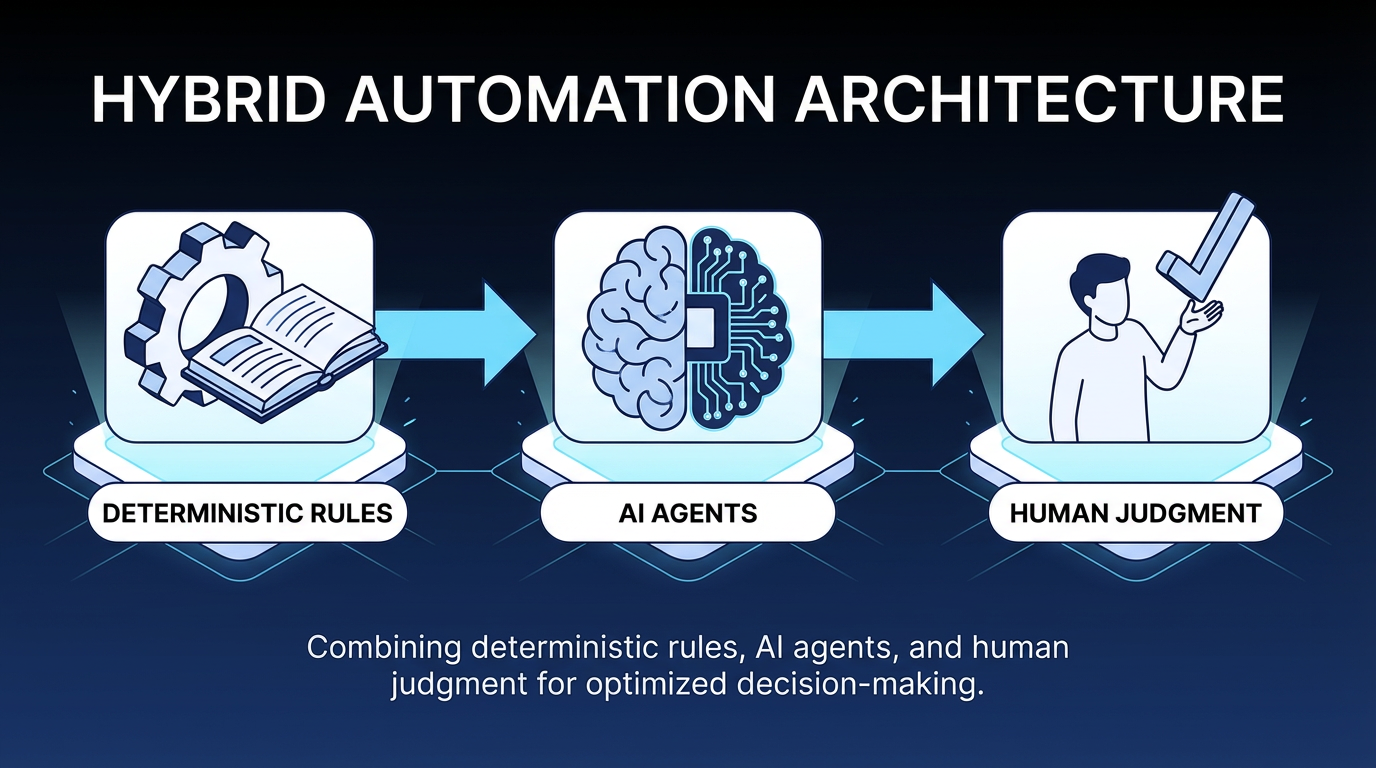

Which Architecture Works for Enterprise IT Process Automation?

Enterprise production architecture for IT process automation combines deterministic automation, AI agents, and human judgment. Each type of decision has a defined place in the workflow.

- Deterministic rules govern high-stakes, auditable decision points where consistency is required. An approval routing policy, a compliance check, and a three-way match follow the same logic every time and should produce the same result.

- AI agents handle reasoning, pattern recognition, and recommendation generation within constrained boundaries. Reading an unstructured supplier contract, classifying an incident ticket, and flagging an invoice discrepancy are tasks that AI supports.

- Human judgment remains in place for decisions that exceed agent confidence thresholds or affect regulated outcomes. At enterprise scale, complex and high-stakes decisions will always require human judgment. Scaling AI agents requires treating them as governed participants with defined roles, explicit oversight structures, and escalation paths.

This architecture reduces the risk of pushing probabilistic agents into steps that require deterministic control.
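The division of labor can be sketched with the three-way match example. In this simplified illustration, the match itself is a deterministic rule, an agent is consulted only on exceptions, and low-confidence cases go to a person. The function names, the 0.90 threshold, and the `agent` callable (a stand-in for whatever model a team actually uses) are assumptions, and real matching usually involves tolerances and line-level detail that are omitted here.

```python
from decimal import Decimal
from typing import Callable, Tuple


def three_way_match(po_qty: int, po_price: Decimal,
                    inv_qty: int, inv_price: Decimal,
                    received_qty: int) -> bool:
    """Deterministic rule: the same inputs always give the same answer."""
    return po_qty == inv_qty == received_qty and po_price == inv_price


def handle_invoice(matched: bool,
                   agent: Callable[[], Tuple[str, float]],
                   threshold: float = 0.90) -> str:
    """Route one invoice through rule, agent, or human (hypothetical flow)."""
    if matched:
        return "approved-by-rule"
    # The agent runs only on exceptions and only recommends a discrepancy label.
    label, confidence = agent()
    if confidence >= threshold:
        return f"exception-queue:{label}"
    return "human-review"
```

Note that the agent never approves anything in this sketch: approval stays with the rule, and the agent's output only determines which queue an exception lands in.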

Pure agent-based approaches struggle to scale in enterprise settings because multi-step workflows compound errors, create governance challenges, and reduce predictability compared with hybrid designs. The highest-complexity scenarios, including auto-remediation, self-healing infrastructure, and agent-led cross-system workflow management, are precisely where the gap between potential and performance is largest. Multi-agent architectures introduce failure modes that did not exist in earlier automation: agent-to-agent communication breakdowns, role conflicts over shared data, and shared-state management issues across long-running processes.
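The compounding effect is simple arithmetic. Assuming, for illustration only, that each step succeeds independently with the same probability p, the chance that an n-step workflow completes without error is p raised to the power n. The per-step figure below is hypothetical, not a measurement from any study.

```python
def end_to_end_success(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


# Illustrative only: a 95%-reliable step chained into longer workflows.
for n in (1, 5, 10, 20):
    print(n, round(end_to_end_success(0.95, n), 3))
```

Under these assumptions, a step that is right 95% of the time yields a ten-step workflow that finishes cleanly only about 60% of the time, which is one reason deterministic rules and human checkpoints are placed between agent steps.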

The hybrid architecture also affects cost through workflow design. Right-sizing each step changes the cost profile at scale. Use rules where consistency is needed, agents where reasoning is needed, and humans where judgment is required.

What IT Process Automation ROI Looks Like in Practice

Operations leaders building an internal business case need dollar-denominated evidence. Documented outcomes from Tier 1 analyst studies provide realistic benchmarks:

- ROI and payback: Enterprise robotic process automation (RPA) deployments achieve a documented 248% ROI over three years with a payback period under six months, according to Forrester TEI analysis. AI implementations show similarly strong returns.

- Annual financial value: Organizations that move automation beyond pilots and into production workflows consistently report that financial returns compound across functions, as each newly automated process reduces manual handoffs and frees team capacity for higher-value work.

- Cycle time reduction: AI agent-assisted workflows can meaningfully reduce cycle times in enterprise resource planning (ERP) and customer relationship management (CRM) contexts, with broader finance and procurement use cases showing similar results.

- Full-time equivalent (FTE) reallocation: Organizations use automation to reduce administrative work and redeploy team capacity toward higher-value activity.

These metrics give teams a starting point for internal planning, but they also set a standard for proof. Business cases should connect ROI, cycle time, and labor reallocation metrics to a specific production workflow rather than to AI adoption in general.
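When tying these metrics to a specific workflow, the underlying arithmetic is worth making explicit. The sketch below uses the simplest common definitions: ROI as net benefit divided by cost, and payback as upfront cost divided by average monthly benefit. The dollar figures are hypothetical, and published analyst studies may use present-value or risk-adjusted figures, which this sketch does not attempt.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Simple ROI as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(upfront_cost: float, monthly_benefit: float) -> float:
    """Months to recover the upfront cost, assuming even monthly benefit."""
    if monthly_benefit <= 0:
        raise ValueError("monthly_benefit must be positive")
    return upfront_cost / monthly_benefit


# Hypothetical single-workflow business case over 36 months.
cost = 400_000.0
benefit = 1_200_000.0
print(f"ROI: {roi(benefit, cost):.0%}")
print(f"Payback: {payback_months(cost, benefit / 36):.1f} months")
```

With these illustrative inputs the ROI is 200% and payback takes about 12 months. Running the same calculation per workflow, rather than for the program as a whole, keeps the business case anchored to something that can be verified in production.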

The business case also needs a clear view of implementation risk. The cost of a failed implementation extends beyond program spend and can erode organizational confidence in future automation efforts.

How Elementum Supports IT Process Automation

The framework above, which covers deterministic governance, hybrid architecture, and production-first deployment, outlines what enterprise programs need to reach production. Our AI Workflow Orchestration Platform is built around these principles.

Our Workflow Engine treats humans, business rules, and AI agents as equal first-class participants in the same workflow. The no-code, drag-and-drop builder enables teams to configure, modify, and extend workflows without filing IT tickets or waiting on vendor engineering cycles. We help build the first workflow, then your team takes over.

Our AI Agent Orchestration capabilities assign the right model to each workflow step, including OpenAI, Google Gemini, Anthropic, Amazon Bedrock, and Snowflake Cortex, while allowing human approval when needed. Every agent action is logged, and enterprise compliance controls for Sarbanes-Oxley (SOX), the Health Insurance Portability and Accountability Act (HIPAA), and the General Data Protection Regulation (GDPR) govern those actions.

On data governance, our patented Zero Persistence architecture directly addresses a common barrier to enterprise adoption. CloudLinks queries your data in real time where it already lives, including Snowflake, Databricks, BigQuery, and Redshift, with row-level and column-level security policies governing access. Workflows can still orchestrate actions across enterprise platforms via API integrations; CloudLinks refers specifically to data access within the underlying data environment.

For operations leaders under pressure to show results within a single budget cycle, our deployment model is built for production in 30 to 60 days.

Programs that reach production share the same design: deterministic rules where consistency is required, AI agents where reasoning is needed, and governance built before the first workflow goes live. We're built for that sequence. We never train on your data, replicate it, or warehouse it. Contact us to discuss how that sequence applies to your environment.

FAQs About IT Process Automation

What Is the Difference Between RPA, Intelligent Automation, and Process Orchestration?

The difference is scope. Robotic process automation (RPA) executes scripted, repetitive tasks on structured data. Intelligent automation adds AI, machine learning (ML), and natural language processing (NLP) to handle unstructured data and context. Process orchestration coordinates end-to-end workflows across multiple tools, participants (people, rules, and agents), and decision points.

How Long Does Enterprise IT Process Automation Implementation Take?

Implementation timelines vary by scope and organizational maturity. Simple, well-defined workflows can reach production in weeks; broader cross-functional programs typically run three to 12 months, with governance design and process redesign consuming most of that time. The pilot-to-production transition is where most programs lose time. Our deployment model is designed for production in 30 to 60 days.

Do We Need to Replace Legacy Systems to Implement IT Process Automation?

Usually, no. Process orchestration is designed to wrap legacy systems, not replace them. Teams often assume that existing platforms must be replaced rather than integrated, but the practical path is to start by integrating across current tools.

How Do We Avoid Pilot Purgatory?

Define production readiness criteria before pilots begin. Establish a formal portfolio governance function with budget accountability for production transitions.
