How Can AI Analyze and Score Supplier Bids?

Enterprise sourcing events often generate dozens of supplier bids, each formatted and written differently. Procurement teams rarely have time to analyze every submission in depth, so many bids are skimmed or compared inconsistently. Manually normalizing formats, mapping terms, and aligning scores across vendors takes hours that procurement budgets and timelines rarely accommodate.

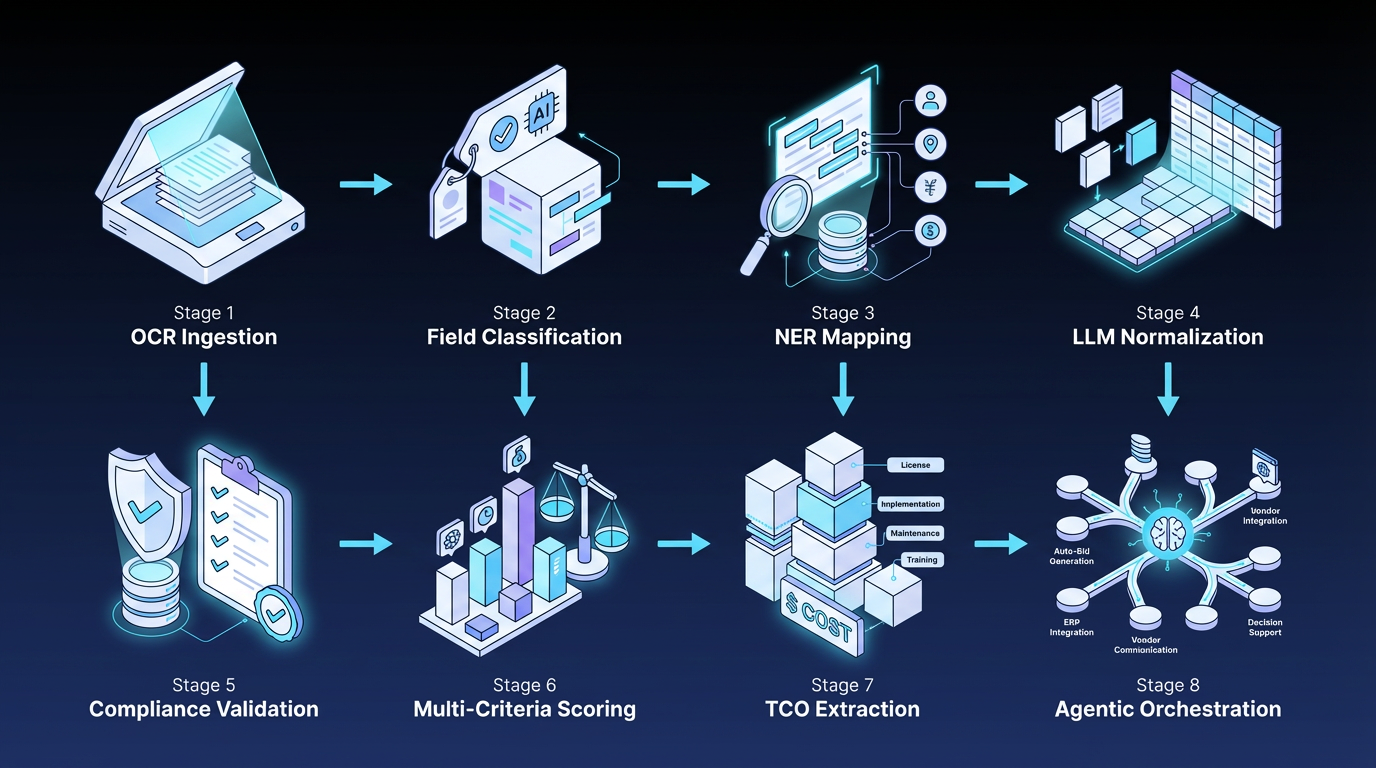

This guide walks through how AI analyzes and scores supplier bids in practice. It explains an eight‑step pipeline that handles document ingestion, entity extraction, compliance checks, scoring, and risk assessment, then shows how orchestration ties these steps together into an auditable workflow.

What Is AI Bid Analysis?

AI bid analysis uses machine learning and natural language processing to evaluate supplier submissions at scale. Procurement teams use it to reduce the time spent manually reading proposals, extracting comparable data points, and scoring bids in spreadsheets.

It works across common bid content, such as pricing tables, technical specifications, compliance certifications, past performance data, and narrative responses to evaluation criteria. It supports the formats suppliers typically submit, like PDFs, Word files, Excel workbooks, and scanned images, so suppliers do not need to conform to a single schema for the system to process their bids.

How the AI Bid Analysis Pipeline Works in 8 Stages

AI bid analysis is not a single model that reads a bid and returns a score. It is a multi‑step pipeline in which different mechanisms handle distinct tasks such as document ingestion, data structuring, compliance validation, scoring, and risk assessment. Each stage feeds into the next, and the sequence is designed to mirror how procurement teams already evaluate bids, but at scale.

In this pipeline, compliance filtering runs before scoring. Non‑compliant bids are removed early, so evaluators see only submissions that meet mandatory requirements.

The eight mechanisms below show how the stages fit together in a working pipeline:

1. Document Ingestion and Field Classification

The system converts each bid into machine-readable text, then classifies content into structured fields such as pricing, specifications, and compliance attestations. Sorting content at the ingestion stage means that pricing data routes to the Total Cost of Ownership (TCO) model, compliance language routes to the validation model, and technical specifications route to the scoring model, without manual tagging.

Ingestion also surfaces early data-quality issues, such as arithmetic errors in pricing totals, missing required fields, and formatting problems that would force evaluators to go back to the supplier mid-review.
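The data-quality checks described above can be sketched in a few lines. This is a minimal illustration, not a production ingestion layer: the field names, the `REQUIRED_FIELDS` tuple, and the bid dictionary shape are all assumptions made for the example.

```python
from decimal import Decimal

# Hypothetical required fields; a real sourcing event would define its own.
REQUIRED_FIELDS = ("supplier", "line_items", "stated_total", "payment_terms")


def find_quality_issues(bid: dict) -> list[str]:
    """Return human-readable data-quality issues found in one parsed bid."""
    issues = []
    for field in REQUIRED_FIELDS:
        if bid.get(field) in (None, "", []):
            issues.append(f"missing required field: {field}")

    line_items = bid.get("line_items") or []
    stated_total = bid.get("stated_total")
    if line_items and stated_total is not None:
        # Decimal avoids float rounding noise when summing currency amounts.
        computed = sum(
            (Decimal(str(item["unit_price"])) * item["quantity"] for item in line_items),
            Decimal("0"),
        )
        if computed != Decimal(str(stated_total)):
            issues.append(
                f"pricing total mismatch: stated {stated_total}, computed {computed}"
            )
    return issues


# Example bid with an empty payment-terms field and a total that does not add up.
bid = {
    "supplier": "Acme Industrial",
    "line_items": [
        {"unit_price": "12.50", "quantity": 100},
        {"unit_price": "3.20", "quantity": 40},
    ],
    "stated_total": "1400.00",
    "payment_terms": "",
}
issues = find_quality_issues(bid)
```

Catching both problems at ingestion means the evaluator can send one clarification request to the supplier up front instead of discovering the gaps mid-review.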

2. Named Entity Recognition and Semantic Normalization

Named Entity Recognition (NER) identifies specific entities in bid text, including product names, service categories, quantities, delivery terms, payment structures, and warranty periods.

Semantic normalization then maps vendor-specific terminology to the buyer's standard procurement concepts, so "next-business-day delivery" in one bid and "T+1 shipping" in another resolve to the same commitment. Without this layer, side-by-side vendor comparison breaks down whenever suppliers use different wording for the same contractual promise.
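As a rough illustration of the normalization idea, the sketch below maps vendor phrasing to canonical concepts with regular expressions. A production system would more likely use a trained model or embeddings; the pattern table and the concept labels here are hypothetical stand-ins.

```python
import re

# Hypothetical mapping from the buyer's canonical concepts to vendor phrasings.
CANONICAL_PATTERNS = {
    "delivery_lead_time_days=1": [
        r"next[- ]business[- ]day delivery",
        r"\bt\+1 shipping\b",
    ],
    "payment_terms_days=30": [
        r"\bnet[- ]?30\b",
        r"payment within 30 days",
    ],
}


def normalize_terms(text: str) -> set[str]:
    """Return the canonical concepts whose patterns appear in the bid text."""
    lowered = text.lower()
    return {
        concept
        for concept, patterns in CANONICAL_PATTERNS.items()
        if any(re.search(pattern, lowered) for pattern in patterns)
    }


# Two bids that word the same commitments differently.
bid_a = normalize_terms("We offer next-business-day delivery, Net 30.")
bid_b = normalize_terms("T+1 shipping; payment within 30 days of invoice.")
```

Both bids resolve to the same set of concepts, which is what makes a side-by-side comparison possible.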

3. Large Language Model Normalization

Narrative responses to evaluation questions do not fit into entity extraction, which is built for structured data points. Large language model (LLM) normalization handles these free-form answers by converting them into a common schema aligned with the buyer's requirements.

In enterprise deployments, this often combines language models with retrieval from internal policies and prior contracts so the model can structure responses against the buyer's specific evaluation criteria. Evaluators still need to validate LLM outputs before accepting them, particularly for narrative content tied to contract value, regulatory commitments, or service level agreement (SLA) language.
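One way to make that validation step concrete is to check the model's structured output against the target schema before anyone relies on it. The sketch below assumes the LLM has already returned a JSON string (the model call itself is not shown), and the schema, field names, and `ALWAYS_REVIEW` set are hypothetical.

```python
import json

# Hypothetical target schema: field name -> accepted Python type(s).
SCHEMA = {
    "implementation_weeks": int,
    "support_coverage": str,
    "sla_uptime_pct": (int, float),
}
# Fields that always go to a human reviewer, here the SLA commitment.
ALWAYS_REVIEW = {"sla_uptime_pct"}


def check_llm_output(raw: str) -> dict:
    """Validate model output against SCHEMA and list fields needing human review."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = None
    if not isinstance(data, dict):
        # Unparseable output: nothing is trusted, everything goes to review.
        return {"fields": {}, "review": sorted(SCHEMA), "valid": False}

    fields, review = {}, set(ALWAYS_REVIEW)
    for name, expected in SCHEMA.items():
        value = data.get(name)
        # bool is a subclass of int, so reject it explicitly.
        if isinstance(value, expected) and not isinstance(value, bool):
            fields[name] = value
        else:
            review.add(name)
    return {
        "fields": fields,
        "review": sorted(review),
        "valid": len(fields) == len(SCHEMA),
    }


good = check_llm_output(
    '{"implementation_weeks": 12, "support_coverage": "24x7", "sla_uptime_pct": 99.9}'
)
bad = check_llm_output(
    '{"implementation_weeks": "about three months", "sla_uptime_pct": 99.9}'
)
```

Even the well-formed response still lists the SLA field for review, reflecting the point that high-stakes narrative content needs a human check regardless of how clean the output looks.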

4. Automated Compliance Validation

Compliance validation scans each bid against a structured requirements matrix that includes mandatory certifications, regulatory language, insurance coverage, and SLA commitments. The output is a pass/fail determination for each requirement with a link to the exact document location where the evidence appears.

Procurement teams can then disqualify non-compliant bids before the scoring stage, keeping evaluators' time focused on bids that have already met the mandatory requirements.
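A simplified version of the requirements matrix check might look like the following. Keyword matching stands in for whatever model a real system would use, and the requirement names, keywords, and page-level evidence format are assumptions for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical requirements matrix: requirement -> keywords that count as evidence.
REQUIREMENTS = {
    "ISO 27001 certification": ("iso 27001",),
    "General liability insurance": ("general liability",),
    "99.9% uptime SLA": ("99.9%", "uptime"),
}


@dataclass(frozen=True)
class Finding:
    requirement: str
    passed: bool
    evidence: Optional[str]  # document location, e.g. "page 2", or None


def validate(pages: list[str]) -> list[Finding]:
    """Return one pass/fail finding per requirement, with the first evidence page."""
    findings = []
    for requirement, keywords in REQUIREMENTS.items():
        evidence = None
        for page_no, text in enumerate(pages, start=1):
            lowered = text.lower()
            if all(keyword in lowered for keyword in keywords):
                evidence = f"page {page_no}"
                break
        findings.append(Finding(requirement, evidence is not None, evidence))
    return findings


pages = [
    "We hold ISO 27001 certification, renewed annually.",
    "Uptime commitment: 99.9% measured monthly.",
]
findings = validate(pages)
compliant = all(finding.passed for finding in findings)
```

Here the bid fails as a whole because no insurance evidence was found, so it would be held back before the scoring stage.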

5. Weighted Multi-Criteria Scoring

Multi-criteria scoring generates quantitative scores across evaluation dimensions configured by the procurement team, such as price, technical fit, implementation timeline, and sustainability commitments. Each dimension carries a weight set by the sourcing team, and the model applies those weights to produce a ranked shortlist.

The system preserves an audit trail capturing the scoring logic, the bid content that produced each score, and any manual adjustments evaluators made during review.
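The weighting arithmetic is a plain weighted sum. In the sketch below, the weights, the 0 to 100 criterion scores, and the supplier names are illustrative assumptions; the per-criterion contributions are kept alongside the total so the result can be traced afterward.

```python
# Hypothetical weights in percent, set by the sourcing team; they must total 100.
WEIGHTS = {"price": 40, "technical_fit": 30, "timeline": 20, "sustainability": 10}


def score_bid(supplier: str, criterion_scores: dict, weights: dict = WEIGHTS) -> dict:
    """Combine 0-100 criterion scores into a weighted total with a traceable breakdown."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must total 100 percent")
    contributions = {
        criterion: weights[criterion] * criterion_scores[criterion] / 100
        for criterion in weights
    }
    return {
        "supplier": supplier,
        "total": sum(contributions.values()),
        "contributions": contributions,  # kept for the audit trail
        "weights": dict(weights),
    }


bids = [
    score_bid("Supplier A", {"price": 80, "technical_fit": 90, "timeline": 70, "sustainability": 60}),
    score_bid("Supplier B", {"price": 95, "technical_fit": 70, "timeline": 80, "sustainability": 50}),
]
# Ranked shortlist, highest weighted total first.
shortlist = sorted(bids, key=lambda bid: bid["total"], reverse=True)
```

Supplier A totals 79.0 and Supplier B totals 80.0, so B ranks first despite a weaker technical score, because price carries the largest weight in this configuration.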

6. Total Cost of Ownership Extraction

Total Cost of Ownership (TCO) extraction runs alongside scoring; a separate model aggregates base price, delivery cost, installation or implementation cost, ongoing maintenance, and any surcharges into a single normalized figure for each supplier.

In a documented public-sector case, an agency automated landed-cost analysis across all vendors rather than limiting detailed TCO review to a few finalists, and the broader coverage yielded savings in a category that manual review had been too narrow to handle.
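To show why a single normalized figure matters, here is a minimal TCO calculation. The cost figures are invented for illustration, and the three-year contract term is an assumption used to convert ongoing maintenance into a total.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostInputs:
    base_price: int
    delivery: int
    implementation: int
    annual_maintenance: int
    surcharges: int


def total_cost_of_ownership(costs: CostInputs, years: int) -> int:
    """Sum one-time costs plus maintenance over the assumed contract term."""
    return (
        costs.base_price
        + costs.delivery
        + costs.implementation
        + costs.annual_maintenance * years
        + costs.surcharges
    )


# Illustrative figures only; the 3-year term is an assumption.
supplier_a = CostInputs(100_000, 2_000, 15_000, 8_000, 500)
supplier_b = CostInputs(92_000, 5_000, 20_000, 12_000, 0)
tco_a = total_cost_of_ownership(supplier_a, years=3)
tco_b = total_cost_of_ownership(supplier_b, years=3)
```

Supplier B quotes the lower base price, but its TCO comes to 153,000 against 141,500 for Supplier A once delivery, implementation, and maintenance are included, which is the kind of gap a price-only comparison misses.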

7. Supplier Risk Assessment

Risk assessment draws on data outside the bid document to evaluate financial, operational, and reputational risks. Inputs commonly include supplier financial filings, past delivery performance, news sentiment, sanctions screening, and cybersecurity posture data.

A review of supply chain risk modeling catalogs the main algorithm types used in procurement, including classification models for compliance screening and graph‑based models that reveal concentration risk across the broader supply chain.
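The concentration-risk idea can be illustrated with a very small dependency graph. This is a simplified stand-in for the graph-based models mentioned above, not one of them: the supplier names, the dependency edges, and the 50% share threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical edges: bidding supplier -> sub-tier suppliers it depends on.
DEPENDS_ON = {
    "Supplier A": {"Foundry X", "Logistics Y"},
    "Supplier B": {"Foundry X", "Logistics Z"},
    "Supplier C": {"Foundry X", "Logistics Y"},
}


def concentration_risks(depends_on: dict, min_share: float = 0.5) -> dict:
    """Return sub-tier suppliers that more than min_share of bidders rely on."""
    dependents = defaultdict(set)
    for supplier, upstream in depends_on.items():
        for node in upstream:
            dependents[node].add(supplier)
    total = len(depends_on)
    return {
        node: sorted(suppliers)
        for node, suppliers in dependents.items()
        if len(suppliers) / total > min_share
    }


risks = concentration_risks(DEPENDS_ON)
```

In this example every bidder depends on Foundry X, so awarding the contract to any of them leaves the buyer exposed to the same upstream failure, a risk that is invisible when each bid is read on its own.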

8. Agentic Orchestration

Agentic orchestration coordinates the previous seven stages as a multi-step workflow rather than a single model call. Autonomous agents route work through the extraction, validation, scoring, and approval steps, and human checkpoints sit at the points where judgment, accountability, or contract value requires a reviewer. Every agent action, model version, and human decision is logged against its workflow step, which is what makes the end-to-end pipeline reviewable.
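A stripped-down orchestration loop helps show where the checkpoints and log entries sit. The stage functions below are stubs, and the step names, version strings, and contract-value threshold are hypothetical; this is a sketch of the pattern, not any particular platform's API.

```python
from dataclasses import dataclass, field
from typing import Callable

HUMAN_REVIEW_THRESHOLD = 250_000  # hypothetical contract-value cutoff


@dataclass
class WorkflowRun:
    bid_id: str
    contract_value: int
    log: list[dict] = field(default_factory=list)

    def record(self, step: str, actor: str, detail: dict) -> None:
        """Append one log entry tied to a workflow step and the actor behind it."""
        self.log.append({"bid_id": self.bid_id, "step": step, "actor": actor, **detail})


def orchestrate(run: WorkflowRun, steps: list, human_approve: Callable) -> str:
    """Run each stage in order, stop on compliance failure, gate approval on value."""
    for name, version, step_fn in steps:
        outcome = step_fn(run)
        run.record(name, "agent", {"model_version": version, "outcome": outcome})
        if name == "compliance" and not outcome["passed"]:
            return "disqualified"
    if run.contract_value >= HUMAN_REVIEW_THRESHOLD:
        approved = human_approve(run)
        run.record("approval", "human", {"approved": approved})
        return "approved" if approved else "rejected"
    run.record("approval", "agent", {"approved": True})
    return "approved"


# Stub stages standing in for the real extraction, validation, and scoring steps.
steps = [
    ("extraction", "extract-v1", lambda run: {"fields_found": 12}),
    ("compliance", "rules-v3", lambda run: {"passed": True}),
    ("scoring", "score-v2", lambda run: {"total": 80.0}),
]
run = WorkflowRun("BID-001", contract_value=400_000)
status = orchestrate(run, steps, human_approve=lambda run: True)
```

Because the contract value exceeds the threshold, the final log entry is attributed to a human rather than an agent, and each earlier entry carries the version of the model or rule set that produced it.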

Business Outcomes of AI-Driven Bid Analysis

Evidence on AI in procurement remains limited, and most published data measure procurement outcomes at the function level rather than at the bid-evaluation stage. The three consistently reported outcomes are cost reduction, shorter cycle time, and more consistent scoring.

- Cost reduction: Operating efficiency gains, reduced spend waste, and category savings appear in some use cases, according to McKinsey procurement outcomes research. The clearest bid‑analysis‑related example is the landed‑cost case described above, where broader vendor coverage yielded savings in a category that manual review had been too narrow to consistently cover.

- Faster cycle time: AI can reduce turnaround in procurement workflows by automating parts of intake, extraction, comparison, and routing, though results vary by use case and workflow design.

- More consistent scoring: AI-driven request for proposal (RFP) scoring has shown more consistent evaluation patterns in government procurement contexts. The mechanisms that produce this consistency, including standardized scoring criteria and repeatable metrics, apply equally to commercial procurement, even though most published examples come from public-sector settings.

Industry adoption data show that more organizations are integrating AI into enterprise workflows, though the extent of deployment varies widely.

Governance Risks to Consider in AI Bid Scoring

Governance constraints typically show up in system integration, data quality, and operating controls. Each of these areas directly affects whether bid-analysis outputs can be trusted, reviewed, and used inside existing procurement processes. Within them, the three risks below need architectural controls built into the workflow from the start:

- Algorithmic bias in scoring: bias can enter through training data, model design, or how evaluators interpret model outputs, and a model trained on sourcing that favored incumbents or specific geographies will reproduce that pattern. Common mitigations include more representative training data and explicit governance over recommendation logic.

- Auditability of automated decisions: decision records need to capture the model version, the data analyzed, the weights applied, and any human modifications. Without that trail, AI-generated scores may not survive audit scrutiny, and as procurement AI moves toward multi-step agentic workflows, each autonomous step needs to be reviewable and attributable.

- Bid data confidentiality: supplier pricing and technical approaches are competitively sensitive, so the provider's data-use and security terms require careful review before submissions are entered into the system. The choice of AI architecture, specifically whether the system stores, replicates, or trains on supplier data, is what determines confidentiality.
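For the auditability point, a decision record could capture the items listed above in a form that makes later changes detectable. This is one possible shape under stated assumptions: the field names are hypothetical, and the record stores a SHA-256 fingerprint of the analyzed bid text rather than the text itself.

```python
import hashlib
import json


def decision_record(bid_text: str, model_version: str, weights: dict,
                    score: float, human_adjustments: list) -> dict:
    """Capture what was scored, by which model, with which weights and overrides."""
    return {
        "model_version": model_version,
        # Fingerprint of the analyzed content, so the input can be verified later.
        "input_sha256": hashlib.sha256(bid_text.encode("utf-8")).hexdigest(),
        "weights": weights,
        "score": score,
        "human_adjustments": human_adjustments,
    }


def seal(record: dict) -> str:
    """Hash the record's canonical JSON so any later edit changes the digest."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


record = decision_record(
    bid_text="Supplier A proposal, full extracted text...",
    model_version="score-v2",
    weights={"price": 40, "technical_fit": 30, "timeline": 20, "sustainability": 10},
    score=79.0,
    human_adjustments=[{"criterion": "timeline", "from": 65, "to": 70, "by": "reviewer-17"}],
)
digest = seal(record)
```

Storing the digest separately from the record lets an auditor confirm that the model version, weights, and human adjustments on file are the ones that were in effect when the score was produced.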

How Elementum Orchestrates AI for Procurement Bid Analysis

Three things in a procurement workflow each need different handling:

- Compliance pass/fail checks need to produce the same result every time

- Scoring and document summarization require language models and contextual reasoning

- Human reviewers need configurable checkpoints tied to contract value and risk exposure

Our AI Workflow Orchestration Platform addresses this through our Three-Participant Model, where humans, deterministic business rules, and AI agents operate as equals in every workflow.

Our Workflow Engine uses a visual, no-code builder in which compliance gates operate as deterministic rules (fixed logic that AI agents cannot override). Natural language processing (NLP) extraction and scoring steps route to the appropriate AI model. Configurable confidence thresholds govern when agents act autonomously versus when human review is required. Every action is logged and revocable.

Our Zero Persistence architecture uses real-time querying rather than persistent storage. CloudLinks query your data in real time within your own cloud environment, including Snowflake, Databricks, BigQuery, and Redshift. Supplier pricing submissions and technical approaches stay within your infrastructure. We never train on your data, never replicate it, and never store it in a warehouse.

We are pre-integrated with OpenAI, Google Gemini, Anthropic, Amazon Bedrock, and Snowflake Cortex. We connect to SAP, Oracle, and Salesforce through APIs. You select the right model for each step, swap models as pricing or capability shifts, and the workflow logic stays intact.

Enterprises typically start with a scoped procurement workflow and expand to adjacent functions as the orchestration layer proves out. Contact us to map workflow orchestration into your architecture and the rest of your AI roadmap.

FAQs About How AI Analyzes and Scores Supplier Bids

These are the questions that procurement, finance, and IT leaders most often raise when scoping AI workflow automation.

Can You Fully Replace Human Judgment in Supplier Bid Evaluation With AI?

You cannot fully replace human judgment in supplier bid evaluation with AI. AI supports extraction, matching, and structured comparison, but qualitative judgment, including strategic fit assessment, nuanced risk interpretation, and relationship dynamics, remains a human responsibility. Procurement governance also emphasizes controls and oversight when procurement teams use AI in decision-support workflows.

What Types of Evaluation Criteria Can You Score Effectively With AI?

Evaluation criteria you can score effectively with AI include structured, rule-based factors such as technical compliance, pricing competitiveness, delivery timelines, sustainability metrics, environmental, social, and governance (ESG) disclosures, and contractual deviations. For more complex qualitative dimensions, organizations still need human review to interpret responses, identify weaknesses, and surface subtle risks.

How Can You Keep Bid Data Secure When Using AI?

Keeping bid data secure when using AI depends on where the system stores and processes your data. On-premises AI deployments and cloud-based Zero Persistence architectures, which query data within the customer's environment without replicating it to the vendor's systems, can protect bid confidentiality. The core question to ask your AI provider is whether the system stores, replicates, or trains on your supplier submissions.

Does AI Bid Scoring Introduce Compliance Risk Under SOX?

AI bid scoring can pose compliance risks under the Sarbanes‑Oxley Act (SOX) when it falls within the scope of internal control over financial reporting (ICFR). Any AI involvement in financially material decisions should be accompanied by documented controls, reviewability, and human oversight.

How Accurate Is AI Bid Scoring Compared to Manual Evaluation?

With high-quality training data, AI bid scoring can produce more standardized outputs than free-form manual review, particularly when the process uses defined scoring criteria and feedback loops. Standardized scoring supports more consistent evaluations across reviewers, and consistency improves further when procurement teams replace free-form qualitative assessments with repeatable metrics tied to defined criteria.
