What Is Agentic AI for the Enterprise?

Most enterprises are experimenting with AI agents. Over 40% of those projects are projected to be canceled before reaching production value. What separates the projects that ship from the ones that stall is rarely the model itself. It comes down to workflow architecture, governance, and execution design. A clear understanding of what agentic AI actually is, and where category definitions blur, also affects whether projects make it to production.

What Agentic AI Is and Isn't

Agentic AI systems set or interpret goals, plan multi-step actions, use tools, make decisions based on feedback, and adapt over time to complete tasks.

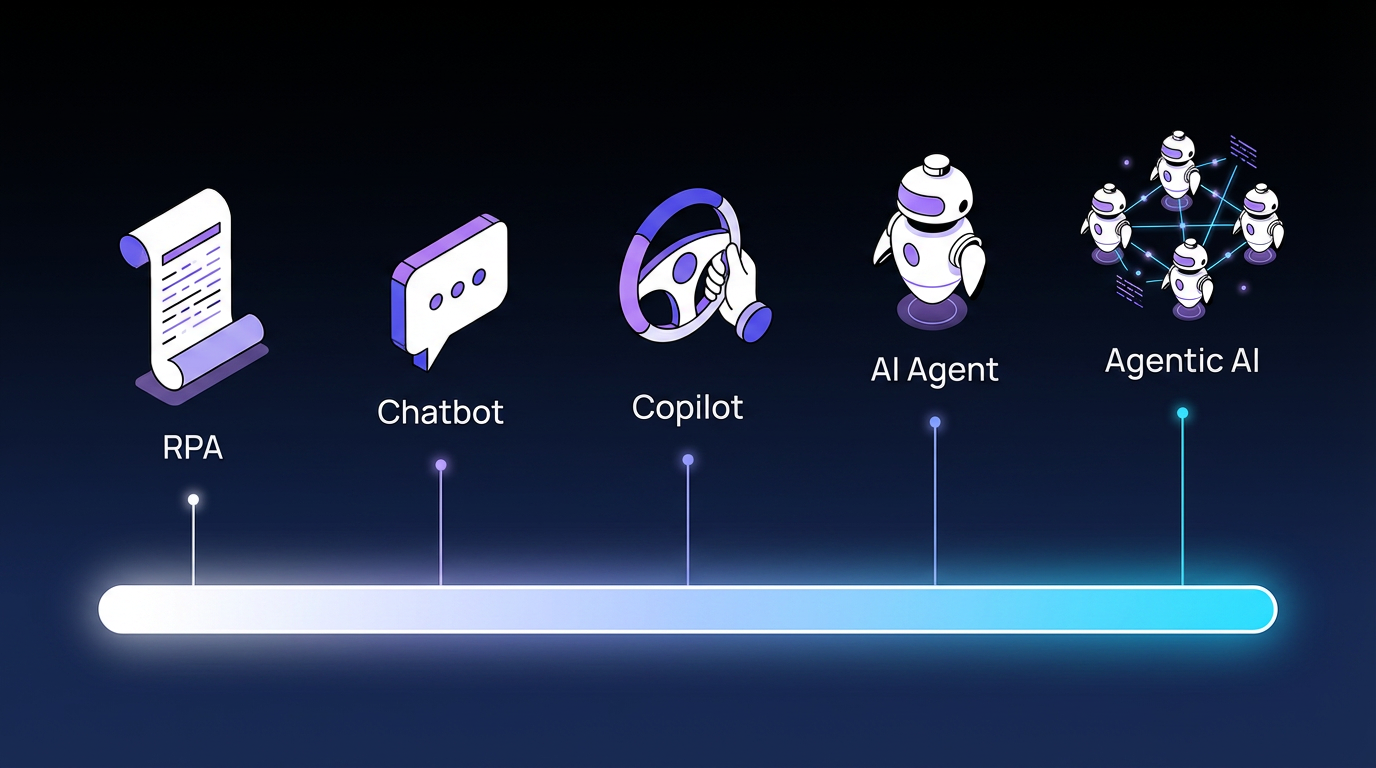

An AI agent can initiate and complete tasks without step-by-step human prompting. That behavior is what separates an agent from a chatbot, a copilot, or Robotic Process Automation (RPA). A chatbot responds when prompted. A copilot assists while a human drives. RPA executes predefined scripts. An AI agent perceives its environment, reasons about what to do, and acts without a human pressing a button at each step.

This distinction is often blurred in the market. Vendors frequently present products as autonomous agents when those products function more like copilots, automation tools, or chatbots.

Agentic AI vs. AI Agents

The terms agentic AI and AI agents are often conflated, but they refer to different scopes.

An AI agent is a single autonomous entity that can perceive, decide, and act. Agentic AI refers to a system that behaves agentically and may use one or more agents, tools, and workflow components. A multi-agent setup is one way to build agentic AI. It is not the definition.

This distinction is important when you’re evaluating AI vendors. You are either buying a point solution for one task or an orchestration layer that coordinates agents, tools, and human decisions across a workflow.

How Agentic AI Works: Architecture and Core Components

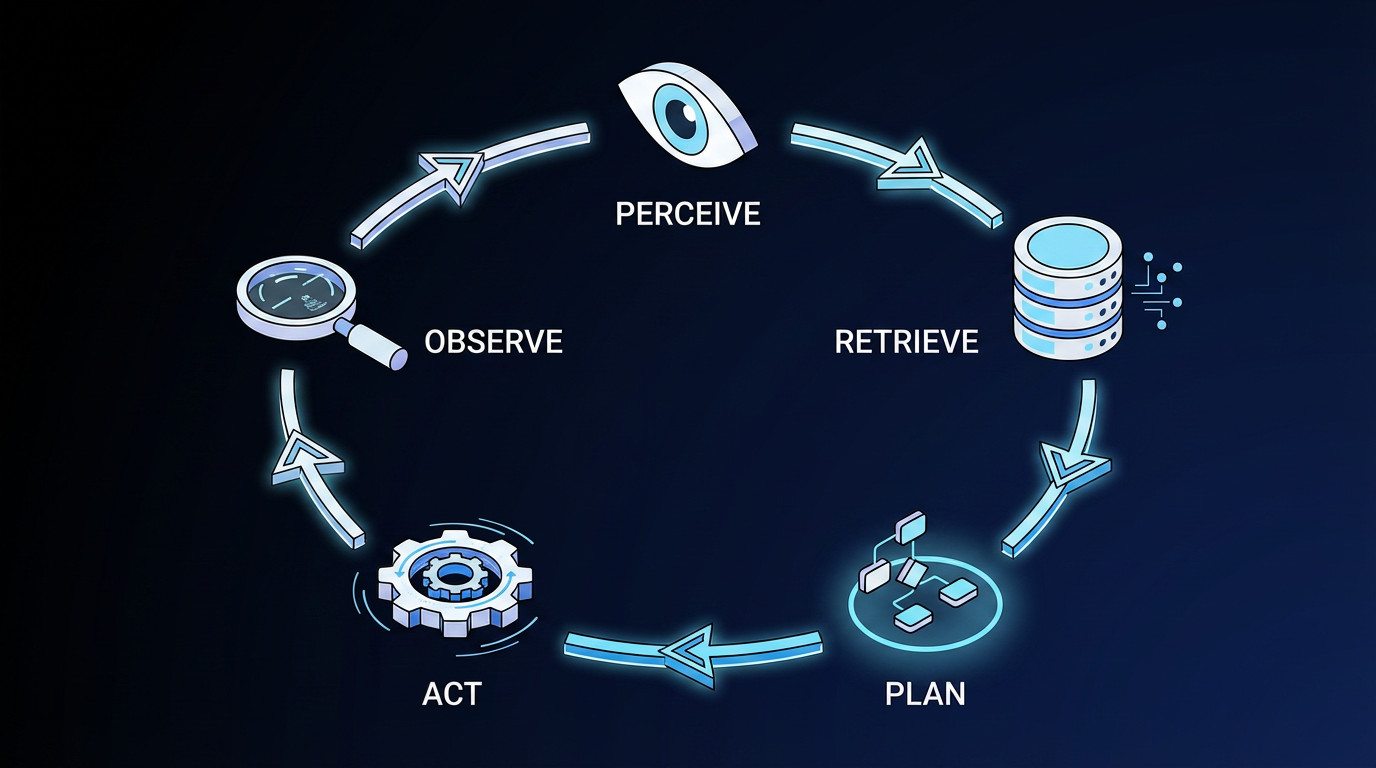

Every agentic system runs a closed-loop control architecture: perceive, retrieve, plan, act, observe.

The agent receives a structured view of the current state, decides on an action, executes it through a tool or protocol interface, observes the result, and updates its internal plan. This is not a single-pass generation like a chatbot response. It is an iterative reasoning loop that continues across multiple steps.
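The loop described above can be sketched in a few lines. This is a minimal illustration, not a production design: the ticket fields, the `classify` and `route` tools, and the rule-based `plan` function (which stands in for a model's planning step) are all assumptions made for the example.

```python
from typing import Callable, Optional

State = dict[str, Optional[str]]

def classify(state: State) -> State:
    """Hypothetical tool: assign a category from keywords in the ticket text."""
    text = (state["text"] or "").lower()
    category = "access" if "password" in text else "general"
    return {**state, "category": category}

def route(state: State) -> State:
    """Hypothetical tool: map the category to an owning team."""
    team = {"access": "identity-team", "general": "service-desk"}[state["category"]]
    return {**state, "assignee": team}

TOOLS: dict[str, Callable[[State], State]] = {"classify": classify, "route": route}

def plan(state: State) -> Optional[str]:
    """Stand-in for the model's planning step: pick the next action, or None when done."""
    if state["category"] is None:
        return "classify"
    if state["assignee"] is None:
        return "route"
    return None

def run_loop(state: State, max_steps: int = 10) -> tuple[State, list[str]]:
    trace: list[str] = []
    for _ in range(max_steps):          # hard step limit so the loop always terminates
        action = plan(state)            # plan from the perceived state
        if action is None:
            break
        state = TOOLS[action](state)    # act through a tool interface
        trace.append(action)            # observe the result and record the step
    return state, trace

final, trace = run_loop({"text": "Password reset needed", "category": None, "assignee": None})
```

Each pass through the loop re-plans from the updated state, which is what distinguishes it from a single-pass response. The `max_steps` limit is one simple way to keep a misbehaving planner from running indefinitely.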

Planning and Reasoning

Planning is the process by which an agent decides what to do next. It is the step where a goal is translated into a sequence of intermediate actions that the agent can execute, evaluate, and adjust as results come in.

One common planning approach is ReAct, which interleaves reasoning traces with tool actions in a single generation loop. The agent thinks through intermediate steps before acting, a technique called chain-of-thought reasoning.

Teams building agentic systems typically choose among three design approaches:

- Single-loop designs: one agent reasons and acts

- Hierarchical designs: a planning agent coordinates executor agents

- Multi-agent designs: subtasks route across specialized agents with distinct tool sets and permission scopes

Tool Use and API Integration

Tool integration is how agents extend beyond language generation into real-world action. When a large language model (LLM) uses a tool, it outputs a structured specification of the tool name and arguments. A separate deterministic runtime layer then executes the call.

Probabilistic behavior remains within the reasoning step, while execution remains deterministic. Standardization layers for agent-tool interfaces are emerging, reducing the custom integration work per tool. However, authentication complexity is increasing rapidly as agents connect to more systems, each with its own credentials, scopes, and audit requirements.
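As a rough sketch of that split, the example below treats the model's output as plain JSON data and lets a separate runtime validate and execute it. The tool name `get_ticket_status`, the registry layout, and the JSON shape are hypothetical and do not follow any specific vendor's tool-calling format.

```python
import json

def get_ticket_status(ticket_id: str) -> str:
    """Hypothetical tool: a real one would call a ticketing API."""
    return f"Ticket {ticket_id} is open"

# Tools the runtime is willing to execute, with the argument names each accepts.
REGISTRY = {"get_ticket_status": (get_ticket_status, {"ticket_id"})}

def execute_tool_call(raw: str) -> str:
    """Validate a model-emitted tool call and run it deterministically."""
    call = json.loads(raw)                       # the model's output is just data
    name, args = call["name"], call["arguments"]
    if name not in REGISTRY:
        raise ValueError(f"unknown tool: {name}")
    func, allowed = REGISTRY[name]
    if set(args) != allowed:
        raise ValueError(f"unexpected arguments for {name}: {sorted(args)}")
    return func(**args)

# Assumed example of what a model might emit for this tool.
model_output = '{"name": "get_ticket_status", "arguments": {"ticket_id": "INC-42"}}'
result = execute_tool_call(model_output)
```

Because the runtime rejects anything outside the registry, a malformed or unexpected model output fails loudly instead of executing.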

Memory Systems

Agents maintain multiple types of memory:

- Working memory holds the current task state in the active context window

- Episodic memory stores past interaction history

- Semantic memory captures domain facts and stable knowledge

- Procedural memory records how to perform recurring tasks
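One way to picture the four memory types is as separate stores on a single object. The sketch below is illustrative only: the class name `AgentMemory`, the field layout, and the sample values are assumptions, and real systems typically back these stores with databases or vector indexes rather than in-process dictionaries.

```python
from dataclasses import dataclass, field

@dataclass
class AgentMemory:
    working: dict = field(default_factory=dict)      # current task state
    episodic: list = field(default_factory=list)     # past interaction history
    semantic: dict = field(default_factory=dict)     # stable domain facts
    procedural: dict = field(default_factory=dict)   # step lists for recurring tasks

    def finish_task(self) -> None:
        """Archive the working state as an episode, then clear it for the next task."""
        if self.working:
            self.episodic.append(dict(self.working))
            self.working.clear()

memory = AgentMemory(
    semantic={"sla_hours": 4},
    procedural={"password_reset": ["verify identity", "reset credential", "notify user"]},
)
memory.working.update({"ticket": "INC-42", "step": "verify identity"})
memory.finish_task()
```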

Feedback and Self-Correction

Agents use structured reflection to recover from errors. Systems that log every step of execution and apply a recovery layer on top run more reliably across multi-step tasks. A single confidence score is not always enough to catch edge cases, so production systems typically layer output validation on top of model responses.
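The sketch below shows one possible shape for that layering: every attempt is logged, each output is validated against explicit rules, and failed attempts are retried up to a limit. The allowed categories, the 0.8 confidence threshold, and the scripted `fake_step` function (a stand-in for a model call) are assumptions for illustration.

```python
from typing import Callable

ALLOWED_CATEGORIES = {"access", "hardware", "billing"}

def validate(output: dict) -> list[str]:
    """Return a list of problems; an empty list means the output passed."""
    problems = []
    if output.get("category") not in ALLOWED_CATEGORIES:
        problems.append("category not in allowed set")
    if output.get("confidence", 0.0) < 0.8:       # assumed threshold
        problems.append("confidence below threshold")
    return problems

def run_with_recovery(step: Callable[[int], dict], max_attempts: int = 3):
    log = []                                       # every attempt is recorded
    for attempt in range(1, max_attempts + 1):
        output = step(attempt)
        problems = validate(output)
        log.append({"attempt": attempt, "output": output, "problems": problems})
        if not problems:
            return output, log
    return None, log                               # caller escalates to a human

def fake_step(attempt: int) -> dict:
    """Scripted stand-in for a model: fails validation once, then succeeds."""
    if attempt == 1:
        return {"category": "unknown", "confidence": 0.95}
    return {"category": "access", "confidence": 0.91}

result, log = run_with_recovery(fake_step)
```

Note that the first attempt reports high confidence yet still fails validation, which is the kind of case a confidence score alone would miss.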

Why Deterministic Workflows Are Required for Enterprise AI

LLM-based agents are probabilistic by design. The same input can produce different outputs across runs. For a chatbot, that variance is a minor inconvenience. For a finance workflow subject to a Sarbanes-Oxley (SOX) audit, the same variance becomes a compliance risk or a control failure.

Agentic AI systems operating in regulated enterprise environments require deterministic behavior: the same input must produce the same output every time, with an auditable trail. In practice, most enterprises have not built that determinism into their AI agent stack. They plug an LLM into a workflow and discover the governance problem only once auditors, legal, or finance start asking questions.

The solution is a deterministic backbone that governs the process, with probabilistic AI applied only at steps where interpretation and reasoning add real value. Without that backbone, running every workflow step through an LLM breaks at scale:

- Reliability decays across long multi-step workflows when every step is probabilistic

- Regulatory compliance demands reproducible audit records that probabilistic systems cannot consistently produce

- Costs escalate when every workflow step routes through an LLM, regardless of whether a simple rule would suffice
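A deterministic backbone of this kind might look like the following sketch, where a model is called only for the interpretation step and a fixed rule handles approval routing. The function names, the 1,000 approval threshold, and the keyword-based `classify_with_llm` placeholder are all assumptions chosen so the example runs offline.

```python
def classify_with_llm(text: str) -> str:
    """Placeholder for a model call; scripted so the example runs offline."""
    return "contract" if "agreement" in text.lower() else "other"

def approval_route(amount: float) -> str:
    """Deterministic rule: same amount, same route, every run."""
    return "auto-approve" if amount < 1000 else "manager-review"

def process(request: dict) -> dict:
    audit = []
    # Interpretation step: unstructured text, so a model is used here.
    doc_type = classify_with_llm(request["text"])
    audit.append(("classify", "llm", doc_type))
    # Control step: a fixed rule, so no model call is made.
    route = approval_route(request["amount"])
    audit.append(("route", "rule", route))
    return {"doc_type": doc_type, "route": route, "audit": audit}

outcome = process({"text": "Master services agreement renewal", "amount": 2500.0})
```

The audit list records which kind of step produced each result, so a reviewer can see where probabilistic output entered the process and where a rule decided.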

5 Agentic AI Use Cases for Enterprise

The use cases below represent domains in which AI reasoning, combined with deterministic governance, yields the most reliable outcomes.

IT Service Management

IT service management (ITSM) is one of the strongest early fits for agentic AI because the work already has defined states, service levels, and escalation paths.

Agentic workflows in ITSM handle repetitive classification, ticket routing, and knowledge retrieval, while human teams retain oversight of escalations and novel incident types. These workflows reduce handling time and mean time to resolution (MTTR), with the largest gains appearing in environments where ticket volume makes manual triage a consistent bottleneck.

Customer Service

Multi-agent customer service workflows coordinate across inbound handling, intent identification, appointment scheduling, and self-service resolution. AI agents handle the structured, repeatable portions of the interaction while humans manage judgment-intensive cases, keeping response quality high without adding headcount for volume spikes.

Procurement and Source-to-Pay

Procurement workflows benefit from agentic AI across supplier selection, Request for Proposal (RFP) management, contract review, and compliance screening. AI agents handle document-heavy intake and classification. Deterministic rules govern approval routing and compliance checks, so the orchestration layer carries the auditable record that procurement and finance teams need.

Finance Operations

Agentic AI in finance operations supports document-heavy review and monitoring workflows, handling intake, classification, and precedent identification while humans retain final review authority. Finance is one of the highest-value domains for this architecture because the combination of high document volume and strict audit requirements makes deterministic governance a hard requirement, not a nice-to-have.

HR Operations

Agents handle inquiry routing, onboarding task execution, and process workflows. Humans retain judgment-intensive decisions such as performance reviews, policy exceptions, and sensitive case escalations. The value lies in the structured, repeatable work: status inquiries, document collection, system provisioning, and routine approvals that previously required manual handling at each step.

What Are the Governance and Security Risks of Agentic AI?

The Open Worldwide Application Security Project (OWASP) is a nonprofit organization that publishes widely used security standards for enterprise applications. Its OWASP Top 10 for agentic applications documents risks already appearing in real-world deployments:

- Tool misuse

- Access control violations

- Cascading failures

- Identity impersonation

- Memory manipulation, where malicious data persists in the agent's memory to influence future sessions

Prompt injection is the risk that gets the most attention, and for good reason. In a chatbot, a prompt injection attack produces a bad answer. In an agent, malicious instructions planted through user input or external data, such as websites, documents, or emails, can hijack the agent's behavior and trigger downstream tool calls, application programming interface (API) requests, and actions across multiple systems.

An agentic-specific regulatory framework has yet to emerge as the enterprise standard, which means organizations must build their own governance infrastructure as regulation develops. Human-in-the-loop controls, graduated kill switches, and real-time anomaly detection are the core controls enterprises are implementing today to minimize risk, alongside immutable audit trails.
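Several of these controls can sit in a single gate in front of tool execution. The sketch below is a simplified assumption of how that might look: the `ToolGate` class, the tool names, and the decision strings are hypothetical, and the in-memory audit list only stands in for a genuinely immutable store. A gate like this does not detect prompt injection. It limits what a hijacked agent is able to do.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ToolGate:
    allowed: set                 # tools this workflow may call at all
    needs_approval: set          # tools that require a human sign-off
    halted: bool = False         # kill switch: when True, nothing executes
    audit: list = field(default_factory=list)

    def check(self, tool: str, approved_by: Optional[str] = None) -> str:
        if self.halted:
            decision = "blocked: kill switch"
        elif tool not in self.allowed:
            decision = "blocked: not allowlisted"
        elif tool in self.needs_approval and approved_by is None:
            decision = "pending: human approval"
        else:
            decision = "allowed"
        self.audit.append((tool, approved_by, decision))   # append-only in this sketch
        return decision

gate = ToolGate(allowed={"read_ticket", "issue_refund"}, needs_approval={"issue_refund"})
decisions = [
    gate.check("read_ticket"),
    gate.check("delete_user"),                      # e.g. requested by injected text
    gate.check("issue_refund"),
    gate.check("issue_refund", approved_by="j.doe"),
]
```

Setting `gate.halted = True` blocks every later call, which is the simplest form of a kill switch. A graduated version could halt individual tools or workflows instead of everything at once.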

Token costs are the other part of the governance problem, and they compound quickly at enterprise scale. Agentic workflows charge for every reasoning step, every retry, and every tool-use sequence, not just the final response.

A simple ticket classification that would cost a fraction of a cent through a rules engine can cost many multiples of that when routed through an LLM on every run. Retries on failures further multiply the bill. Legacy billing and financial operations (FinOps) tools were not built to expose those cost drivers at the workflow level, so cost overruns are usually caught after the fact rather than before.
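A back-of-the-envelope model makes these cost drivers concrete. Every number in the sketch below is a made-up placeholder rather than vendor pricing, and the retry model (each call is retried at most once, at a fixed rate) is a simplifying assumption.

```python
def llm_cost_per_run(tokens_per_call: int, calls_per_run: int,
                     retry_rate: float, price_per_1k_tokens: float) -> float:
    """Expected cost of one workflow run, counting retried calls."""
    expected_calls = calls_per_run * (1 + retry_rate)
    return expected_calls * tokens_per_call / 1000 * price_per_1k_tokens

# All figures below are illustrative placeholders, not real prices or volumes.
per_run = llm_cost_per_run(tokens_per_call=2000, calls_per_run=5,
                           retry_rate=0.2, price_per_1k_tokens=0.01)
monthly = per_run * 100_000   # assumed 100,000 runs per month
```

With these placeholder inputs, the sketch works out to roughly 0.12 per run and about 12,000 per month. The point is the shape of the formula: call count, retries, and volume multiply together, so a small per-run figure grows quickly.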

For enterprises deploying agents across multiple business units without a cost governance layer, token spend becomes the line item nobody forecasted and everybody has to explain.

How Elementum Applies Deterministic Orchestration to Agentic AI

Enterprise workflows need deterministic governance for auditability, reproducibility, and cost control, with AI agents applied at the specific steps where reasoning and interpretation add value. Without that governance layer, agent sprawl, cost escalation, and audit gaps compound at enterprise scale.

Elementum's Workflow Engine is built around that architecture. It treats humans, business rules, and AI agents as equal participants in every process. Deterministic rules handle the steps that require consistency (routing, approval logic, compliance checks), while AI agents handle interpretation tasks such as document processing, classification, and data analysis. The result is a workflow in which consistent steps remain under control, and AI is applied where it adds value, rather than defaulting to premium LLM calls across the board.

Our AI Agent Orchestration platform connects to any AI agent and is pre-integrated with OpenAI, Anthropic, Gemini, Amazon Bedrock, and Snowflake Cortex, with no LLM vendor lock-in. Configurable AI and human decision thresholds with confidence scoring determine which decisions route to human review and which run automatically, and every agent action is logged with a full audit trail. Built-in guardrails and input validation defend against prompt injection across every model interaction.

Data handling is treated as part of workflow design, not a separate concern. Our patented Zero Persistence architecture keeps your data yours: we never train on it, never replicate it, and never warehouse it. CloudLinks queries your data in real time, where it already lives, with no copies, no syncing, and no new warehouses.

For enterprise AI leaders building the business case now, the decision is how to deploy agentic AI within a governance model that holds. Outcomes need to stay auditable. Costs need to be right-sized. And models need to stay swappable without rebuilding the underlying process.

Contact us to talk through your agentic AI roadmap.

FAQs About Agentic AI

How Is Agentic AI Different From Generative AI?

Generative AI creates content such as text, images, or code based on a prompt. Agentic AI goes further by interpreting goals, planning multi-step actions, using tools, and executing tasks autonomously. A generative AI model writes an email. An agentic AI system reads a customer complaint, classifies it, routes it to the right team, and triggers a follow-up workflow.

How Do You Prevent Agent Sprawl in Enterprise AI Deployments?

Agent sprawl occurs when business units deploy AI agents independently, without unified orchestration, governance, or visibility across deployments. Preventing it requires an orchestration layer that governs what agents can access and do, routes decisions to humans at defined thresholds, and produces an audit trail across every agent action.

What's the Difference Between Agentic AI and RPA?

RPA executes predefined scripts against structured interfaces: if X happens, do Y. It has no reasoning capability and breaks when interfaces change. Agentic AI interprets goals, plans its approach, and adapts to feedback, which makes it well-suited for unstructured tasks such as reading contracts or classifying ambiguous requests. Many enterprise workflows need both: deterministic rules for consistent steps and AI agents for interpretation where judgment is required.

How Long Does Agentic AI Take to Implement and Show Results?

It depends on the vendor your company is working with. Organizations that connect agentic workflows to existing systems without data migration or rip-and-replace typically reach production for the first workflow in 30 to 60 days. Delays usually happen when companies start with a use case that's too complex for their governance architecture, or try to automate before validating accuracy in supervised mode. Starting with a high-frequency, well-defined workflow gives teams a production-ready baseline to build from.

Does Agentic AI Require Companies to Replace Their Existing Tech Stack?

Not always. Many enterprises keep their existing tech stack and layer an orchestration platform on top of it with the right governance, authentication, and audit trails.

Others use agentic workflows to replace certain platforms outright. Elementum, for example, can serve as a ServiceNow alternative for companies looking to consolidate. The real question is whether your orchestration layer gives you enough control over what agents can access and do across your stack, and whether the platforms you already run are still earning their keep.
