Human-in-the-Loop Workflows: Definition and How to Build Them

Between regulatory mandates like the EU AI Act and growing pressure to show measurable return on investment (ROI), the tolerance for deploying ungoverned AI in production is shrinking.

Human-in-the-loop (HITL) workflows define where autonomous AI action ends and human judgment begins. They embed human decision points inside the automation flow rather than bolting on a separate exception process afterward.

What follows covers the definition, the case for urgency, and a practical build guide for teams that need oversight to hold up under enterprise conditions.

What Human-in-the-Loop Workflows Are

A HITL workflow requires a human to actively approve, edit, or reject an AI output before it produces downstream consequences. The AI suggests; the human decides. Nothing irreversible moves forward without sign-off.

HITL functions as a risk-calibrated design choice rather than a binary toggle. The presence of a human alone doesn't eliminate risk. The design of the human-computer interaction determines whether oversight is meaningful or performative.

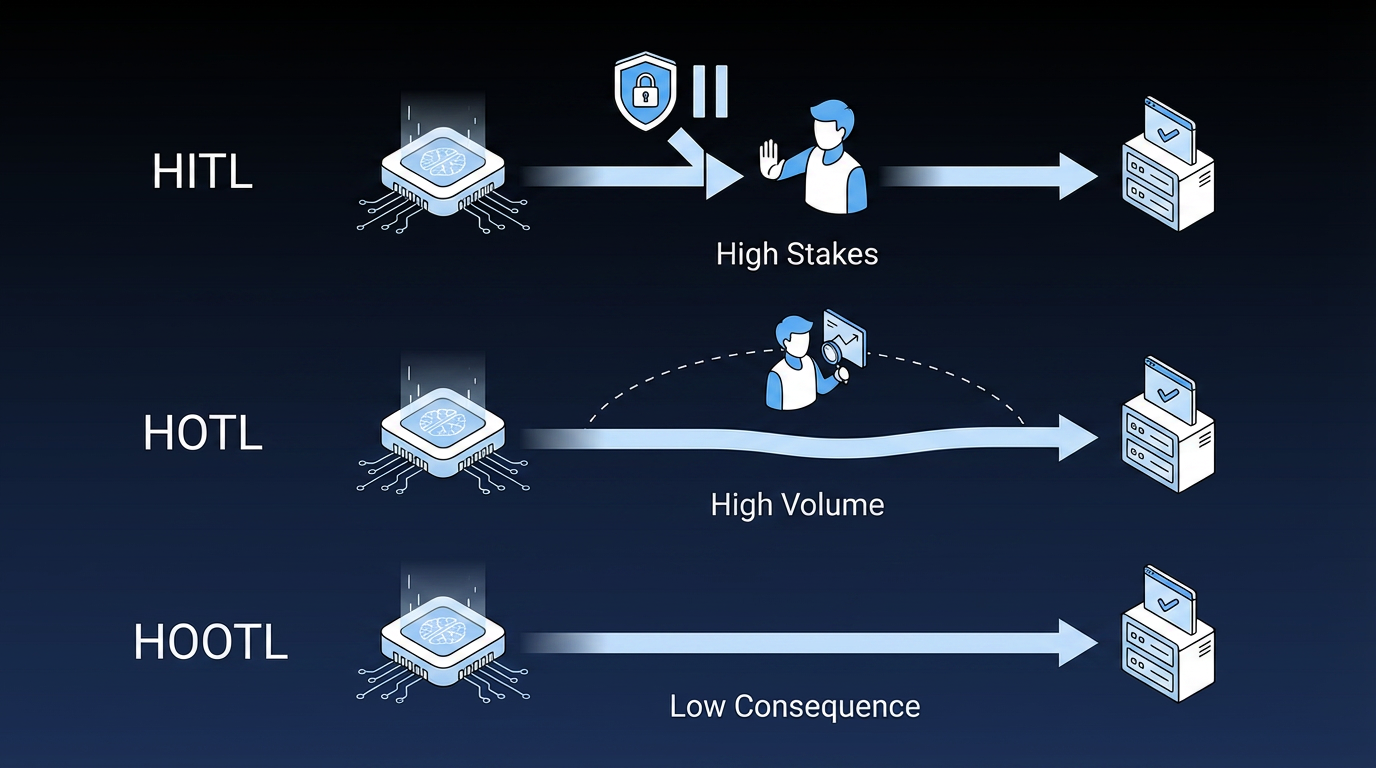

The Human Oversight Taxonomy

Enterprise architects need to distinguish between three oversight models before making any deployment decision.

- Human-in-the-loop (HITL): Enterprises use it for high-stakes, lower-volume decisions: loan approvals, regulated output review, or procurement contract finalization. A human approves or rejects each AI output at critical decision points before the workflow proceeds.

- Human-on-the-loop (HOTL): Enterprises use it for high-volume, auditable workflows such as security alert triage or fraud flagging. The AI operates autonomously, while a human monitors aggregate behavior and retains the ability to override.

- Human-out-of-the-loop (HOOTL): Enterprises reserve it for structured, low-consequence tasks like routine data classification. No human review occurs.

Enterprise teams often deploy all three simultaneously as a risk-stratified architecture, matching the oversight model to each workflow's risk profile.
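One way to make a risk-stratified architecture explicit is a small registry that records which oversight model applies to each workflow. The sketch below is illustrative only: the workflow names come from the examples above, while the `Oversight` enum, the `WORKFLOW_OVERSIGHT` registry, and the `requires_approval` helper are hypothetical names, not part of any particular product.

```python
from enum import Enum


class Oversight(Enum):
    HITL = "human-in-the-loop"       # a human approves each output
    HOTL = "human-on-the-loop"       # a human monitors and can override
    HOOTL = "human-out-of-the-loop"  # no human review


# Hypothetical registry: one oversight model per workflow.
WORKFLOW_OVERSIGHT = {
    "loan_approval": Oversight.HITL,
    "procurement_contract_finalization": Oversight.HITL,
    "security_alert_triage": Oversight.HOTL,
    "fraud_flagging": Oversight.HOTL,
    "routine_data_classification": Oversight.HOOTL,
}


def requires_approval(workflow: str) -> bool:
    """Return True if a human must sign off before the workflow proceeds.

    Unregistered workflows default to HITL (an assumption made here so that
    nothing new runs unreviewed by accident).
    """
    return WORKFLOW_OVERSIGHT.get(workflow, Oversight.HITL) is Oversight.HITL
```

Defaulting unknown workflows to the strictest model is a design choice in this sketch; a team could equally reject unregistered workflows outright.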

Why Human-in-the-Loop Workflows Are Non-Negotiable Now

Enterprises are deploying AI agents faster than governance programs can keep pace. Fifty-one percent of organizations using AI experienced at least one negative consequence, with roughly a third of those tied to AI inaccuracy, according to McKinsey. Most enterprises still lack a mature governance model for autonomous AI agents.

These three forces make HITL workflows a structural requirement for high-risk enterprise settings.

- Regulatory mandates are becoming law: EU AI Act Article 14 requires that high-risk AI systems be designed so that natural persons can effectively oversee them, with obligations applying from August 2026. NIST IR 8596 states directly that organizations will need human oversight to maintain regulatory and legal compliance. By 2029, 70% of government agencies will require explainable AI and HITL mechanisms for automated citizen-facing decisions, according to Gartner.

- Agentic AI amplifies the consequences of misconfiguration: A misconfigured agent doesn't make one bad decision and stop. It repeats the same bad decision at scale until someone catches it. The difference in consequence between a human mistake and an agent mistake is volume: agents don't get tired or second-guess themselves.

- Governance confidence is low across enterprises: Only 13% of IT application leaders believe their organization has the right governance in place to manage AI agents, according to Gartner.

Each of these forces compounds the others: regulatory deadlines shorten the window, agentic deployment raises the stakes, and low confidence in governance means most organizations are running behind on all three simultaneously.

How to Build Human-in-the-Loop Workflows That Scale

Handing full control to the AI without oversight creates risk, while sending every decision to a human doesn't scale. Risk-stratified routing resolves this tension by matching the oversight model to each decision's consequence and confidence level.

Step 1: Map Workflows and Classify Risk Before Choosing Technology

Audit each business process end-to-end before agent configuration begins. Document all engaged systems, exception cases, and mandatory human intervention points.

AI agents expose ambiguities in business process design that human employees compensate for through institutional knowledge and contextual judgment. An agent won't handle an undefined exception; it will follow incomplete rules and fail visibly. Mapping first prevents that.
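The output of this mapping step can be captured as structured data so that gaps are visible before any agent is configured. The following is a minimal sketch under stated assumptions: the `WorkflowMap` class, its field names, and the invoice example are all hypothetical, and an exception case with an empty handling string stands in for "undefined".

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowMap:
    """Hypothetical record produced by auditing one business process."""
    name: str
    systems: list[str]
    # exception case -> documented handling ("" means nobody has defined it yet)
    exception_cases: dict[str, str] = field(default_factory=dict)
    human_intervention_points: list[str] = field(default_factory=list)

    def undefined_exceptions(self) -> list[str]:
        """Exception cases that still have no documented handling."""
        return [case for case, handling in self.exception_cases.items()
                if not handling.strip()]

    def ready_for_agent_config(self) -> bool:
        """Ready only when systems are listed and every exception is handled."""
        return bool(self.systems) and not self.undefined_exceptions()


invoice = WorkflowMap(
    name="invoice_approval",
    systems=["ERP", "email"],
    exception_cases={"missing PO number": "route to AP clerk",
                     "duplicate invoice": ""},
    human_intervention_points=["final payment release"],
)
print(invoice.undefined_exceptions())    # ['duplicate invoice']
print(invoice.ready_for_agent_config())  # False
```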

Step 2: Design Confidence Threshold Routing

Confidence-threshold routing is the most common HITL pattern. The AI assigns a confidence score to each output. Outputs above the threshold auto-execute; outputs below route to human review.

Calibrate to failure cost, not average accuracy. A model that's highly accurate on average but catastrophically wrong on a small percentage of high-stakes cases needs a different threshold than one with uniform error distribution.
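To illustrate why failure cost matters more than average accuracy, here is a rough break-even calculation. It assumes the confidence score is a calibrated probability that the output is correct, that a wrong auto-executed output costs a fixed `failure_cost`, and that each human review costs a fixed `review_cost`. Those are simplifying assumptions, and the function name and dollar figures are hypothetical.

```python
def break_even_confidence(review_cost: float, failure_cost: float) -> float:
    """Confidence above which auto-executing is cheaper than reviewing.

    Auto-executing at confidence c has expected cost (1 - c) * failure_cost.
    Reviewing costs review_cost. Setting the two equal gives
    c = 1 - review_cost / failure_cost.
    """
    if review_cost <= 0 or failure_cost <= 0:
        raise ValueError("costs must be positive")
    # If a review costs more than a failure, the break-even point is 0:
    # auto-execution is always cheaper under these assumptions.
    return max(0.0, 1.0 - review_cost / failure_cost)


# Same $5 review cost, very different failure costs.
print(round(break_even_confidence(5, 50), 4))    # 0.9
print(round(break_even_confidence(5, 5000), 4))  # 0.999
```

Under these assumptions, a hundredfold increase in failure cost moves the break-even threshold from 0.9 to 0.999, which is why a single global threshold rarely fits every action.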

For example, an enterprise might set this routing logic: if the impact score exceeds a dollar threshold or the confidence score falls below a defined band, route to human review. If a preapproved playbook exists and both scores are within tolerance, auto-execute.
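The routing rule described above can be sketched in a few lines. The policy fields, the threshold values, and the `route` function are hypothetical. The case where scores are within tolerance but no preapproved playbook exists is not specified above, so this sketch assumes it falls back to human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingPolicy:
    max_auto_impact_usd: float  # hypothetical dollar threshold
    min_confidence: float       # hypothetical lower edge of the confidence band


def route(impact_usd: float, confidence: float, has_playbook: bool,
          policy: RoutingPolicy) -> str:
    """Return 'auto_execute' or 'human_review' for one AI output."""
    # Either trigger alone is enough to require a human.
    if impact_usd > policy.max_auto_impact_usd or confidence < policy.min_confidence:
        return "human_review"
    # Both scores are within tolerance: auto-execute only with a playbook.
    if has_playbook:
        return "auto_execute"
    # Assumption: no preapproved playbook means a human decides.
    return "human_review"


policy = RoutingPolicy(max_auto_impact_usd=10_000, min_confidence=0.90)
print(route(500, 0.97, True, policy))     # auto_execute
print(route(50_000, 0.99, True, policy))  # human_review
```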

Step 3: Implement Tiered Review Rather Than Binary Routing

Binary routing (auto-execute or human review) can be difficult to scale, because over-routing simple cases to specialists creates avoidable delays. Graduated escalation hierarchies match case characteristics with appropriate expertise levels.

- Low risk (auto-execute): Meets the confidence threshold; a preapproved playbook is in place.

- Medium risk (quick verification): Mid-tier confidence, moderate consequence.

- High risk (domain expert review): Low confidence, high consequence, or regulatory classification trigger.

Clear decision boundaries and transparent audit trails matter most in high-risk AI systems.
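The three tiers can be expressed as a small classification function. This is a sketch, not a prescribed rule set: the numeric cutoffs (`auto_threshold`, `review_floor`), the consequence labels, and the requirement that auto-execution also be low-consequence are assumptions added for illustration.

```python
from enum import Enum


class Tier(Enum):
    AUTO_EXECUTE = "auto-execute"
    QUICK_VERIFICATION = "quick verification"
    EXPERT_REVIEW = "domain expert review"


def assign_tier(confidence: float, consequence: str, regulatory_trigger: bool,
                has_playbook: bool, auto_threshold: float = 0.95,
                review_floor: float = 0.70) -> Tier:
    """Map one case to a review tier; consequence is 'low', 'moderate', or 'high'."""
    # High risk: low confidence, high consequence, or a regulatory trigger.
    if regulatory_trigger or consequence == "high" or confidence < review_floor:
        return Tier.EXPERT_REVIEW
    # Low risk: meets the confidence threshold and a preapproved playbook exists.
    if confidence >= auto_threshold and has_playbook and consequence == "low":
        return Tier.AUTO_EXECUTE
    # Everything in between gets a quick human check.
    return Tier.QUICK_VERIFICATION
```

Checking the high-risk conditions first means a regulatory trigger always wins, even when confidence is high and a playbook exists.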

Step 4: Build Durable Interrupt-and-Resume Infrastructure

HITL workflows that span hours or days need state management. The workflow must pause for human input without losing context, then resume after approval. An agent performing business workflows must store analysis results, business decisions, user input, and error messages across sessions.

Without durable state management, a dropped workflow means lost work, re-processing, and broken trust in the system.
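As a rough illustration of interrupt-and-resume, the sketch below persists workflow state to SQLite when a run pauses for approval and reloads it when the decision arrives. The `ApprovalStore` class, table layout, and status values are hypothetical. A production system would more likely rely on a workflow engine or managed database, but the pause, persist, and resume steps look similar.

```python
import json
import sqlite3


class ApprovalStore:
    """Hypothetical durable store for runs that are waiting on a human."""

    def __init__(self, path: str = "hitl_runs.db"):
        # A file path survives process restarts; ":memory:" does not.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS runs ("
            "run_id TEXT PRIMARY KEY, status TEXT NOT NULL, state TEXT NOT NULL)"
        )
        self.db.commit()

    def pause(self, run_id: str, state: dict) -> None:
        """Persist everything the workflow needs before waiting for approval."""
        self.db.execute(
            "INSERT OR REPLACE INTO runs VALUES (?, 'awaiting_approval', ?)",
            (run_id, json.dumps(state)),
        )
        self.db.commit()

    def resume(self, run_id: str, decision: str, reviewer: str) -> dict:
        """Reload saved state, attach the human decision, and mark it resumed."""
        row = self.db.execute(
            "SELECT status, state FROM runs WHERE run_id = ?", (run_id,)
        ).fetchone()
        if row is None:
            raise KeyError(run_id)
        status, state_json = row
        if status != "awaiting_approval":
            raise RuntimeError(f"run {run_id} is not awaiting approval")
        state = json.loads(state_json)
        state["human_decision"] = {"decision": decision, "reviewer": reviewer}
        self.db.execute(
            "UPDATE runs SET status = 'resumed', state = ? WHERE run_id = ?",
            (json.dumps(state), run_id),
        )
        self.db.commit()
        return state
```

In use, the agent would call `pause` with its analysis results, business decisions, user input, and error messages, and a separate process could call `resume` hours or days later. Rejecting a second `resume` on the same run guards against applying one approval twice.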

Step 5: Close the Feedback Loop

Treat human decisions as training signals. When a reviewer accepts, rejects, or edits an AI output, that signal should route back to improve the model. Execution errors feed the tool interface layer. Reasoning errors feed the planner's training loop. Environmental fluctuations update context buffers without touching core logic.

Systematic feedback routing turns HITL workflows from a cost center into a continuous improvement mechanism.
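The feedback routing described above can be sketched as a simple dispatcher. The destination names mirror the three routes in the text. The `ReviewSignal` class, the `accepted_examples` and `needs_triage` queues, and the error-type labels are hypothetical additions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

# Error type -> where the signal should go (names are illustrative).
ERROR_DESTINATIONS = {
    "execution_error": "tool_interface_layer",
    "reasoning_error": "planner_training_loop",
    "environment_change": "context_buffer",
}


@dataclass(frozen=True)
class ReviewSignal:
    output_id: str
    decision: str                      # "accept", "reject", or "edit"
    error_type: Optional[str] = None   # set by the reviewer on reject/edit


def route_feedback(signals: list[ReviewSignal]) -> dict[str, list[str]]:
    """Group reviewed output IDs by the component that should learn from them."""
    queues: dict[str, list[str]] = defaultdict(list)
    for signal in signals:
        if signal.decision == "accept":
            queues["accepted_examples"].append(signal.output_id)
        else:
            # Rejections or edits without a recognized error type go to triage.
            destination = ERROR_DESTINATIONS.get(signal.error_type, "needs_triage")
            queues[destination].append(signal.output_id)
    return dict(queues)
```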

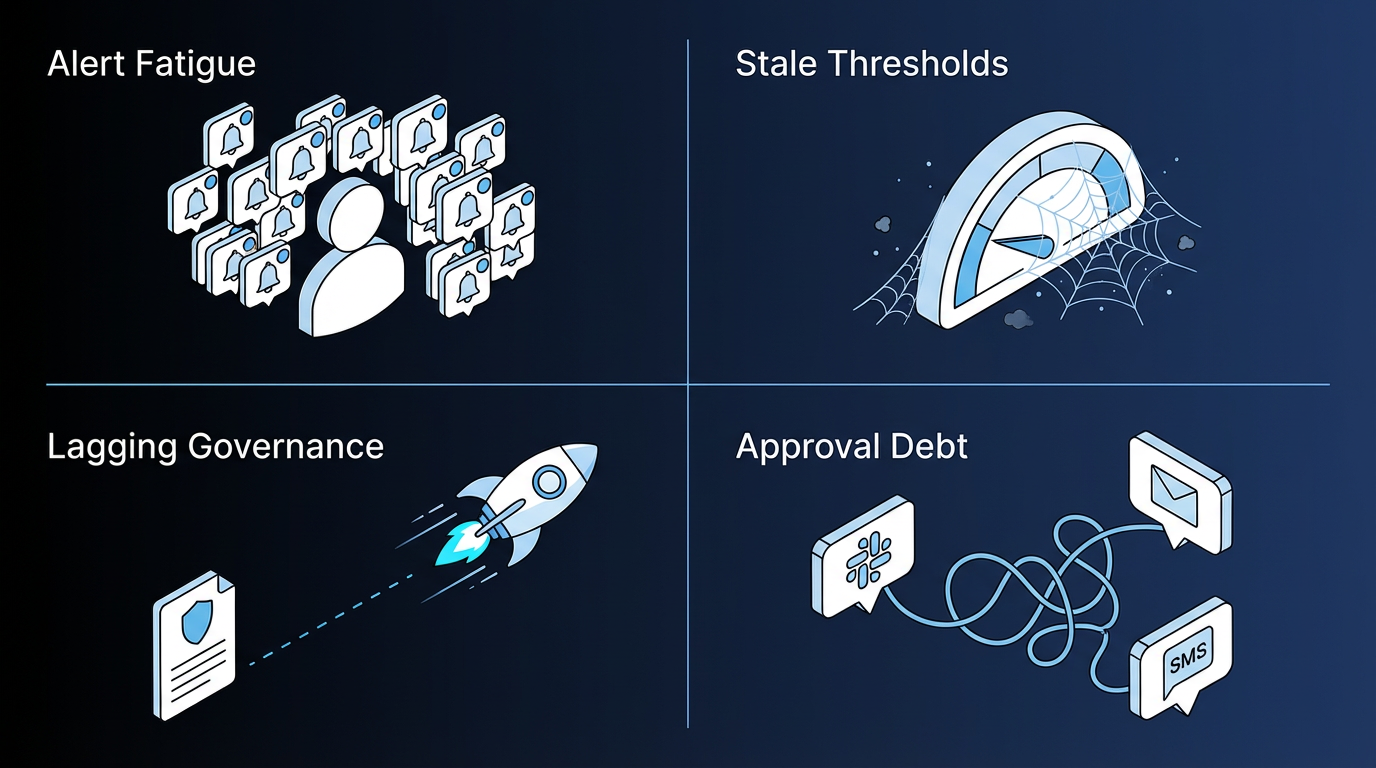

What Degrades Human-in-the-Loop Workflows at Scale

Even well-designed HITL workflows degrade if these failure modes go unaddressed.

- Alert fatigue from over-escalation: When operators set the auto-execute confidence threshold too high, too many outputs fall below it, and reviewers receive more escalations than they can evaluate. They begin approving without genuine evaluation, which defeats the purpose of oversight. Review thresholds on a regular cadence so escalation volume doesn't drift upward until human review becomes performative.

- Stale confidence thresholds: A common failure mode in production AI teams is setting a confidence threshold at deployment and never revisiting it, with no documentation of its rationale. Thresholds should be attached to the action and its consequence, not just to the model output type. Recalibrate regularly so routing logic still reflects the actual failure cost.

- Governance that lags deployment: As systems absorb more traffic and support more teams, policy controls often fail to keep pace with capacity expansion. Effective governance requires cross-functional ownership across legal, compliance, product, security, and engineering, not engineering alone.

- Approval orchestration debt: When approval arrives through different channels for different agents (Slack for one, email for another, a custom interface for a third), the result is multiple approval systems to maintain, each accumulating its own logic debt. Design a unified approval orchestration layer from the start. Every new agent that creates a separate approval path adds maintenance, security, and audit overhead.

All four failure modes share a root cause: governance decisions teams make at deployment and never revisit. Building in scheduled reviews for thresholds, escalation volume, and approval architecture prevents the slow drift that turns a well-designed HITL system into a rubber stamp.
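Scheduled reviews are easier to enforce when each threshold carries its own rationale and review date. The sketch below is one possible shape for that record, along with a simple escalation-rate check. The `ThresholdRecord` class, the 90-day default, and the function names are assumptions for illustration, not recommended values.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ThresholdRecord:
    """Hypothetical record tying a threshold to an action and its rationale."""
    action: str             # the action and consequence the threshold guards
    min_confidence: float
    rationale: str          # why this value was chosen
    last_reviewed: date

    def needs_review(self, today: date, max_age_days: int = 90) -> bool:
        """Flag thresholds that are undocumented or overdue for recalibration."""
        undocumented = not self.rationale.strip()
        overdue = today - self.last_reviewed > timedelta(days=max_age_days)
        return undocumented or overdue


def escalation_rate(escalated: int, total: int) -> float:
    """Share of outputs routed to human review in a period."""
    return escalated / total if total else 0.0
```

Tracking `escalation_rate` per period alongside `needs_review` gives a team two concrete signals to put on a review calendar: thresholds that have gone stale and escalation volume that is drifting.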

How Elementum Operationalizes Human-in-the-Loop Workflows

HITL workflow scaling often breaks down at the routing architecture level. Adding more reviewers only gets you so far when routing is the real lever.

Our Workflow Engine and AI Agent Orchestration capabilities treat humans, AI agents, and deterministic business rules as equals in every process, through our Three-Participant Model. We assign each step to the right participant: AI agents handle tasks requiring interpretation, deterministic rules handle logic that must produce the same result every time, and humans handle judgment calls with regulatory or material consequences. Human decision points are a native configuration option, not a fallback.

Configurable confidence thresholds with scoring let operators define when AI agents act autonomously and when human intervention is required. Operators can adjust these thresholds at any time post-deployment without rebuilding the workflow. Intelligent routing with flexible approval chains (sequential, parallel, conditional) sends decisions to the right people through the channels they already use: Slack, Microsoft Teams, email, SMS, or in-app.

We log every agent action with a full compliance audit trail that supports SOC 2 Type II, the General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), Sarbanes-Oxley (SOX), and the Health Insurance Portability and Accountability Act (HIPAA).

Our patented Zero Persistence architecture means your data stays yours: we never train on it, never replicate it, and never warehouse it. CloudLinks query data in real time where it already lives: Snowflake, Databricks, BigQuery, and Redshift. Row-level and column-level security policies control exactly what each agent can see and touch.

Pre-integration with OpenAI, Gemini, Anthropic, Amazon Web Services (AWS) Bedrock, and Snowflake Cortex means no large language model (LLM) vendor lock-in. Swap models, agents, and tools without rebuilding workflow logic.

Production deployment runs in 30 to 60 days for scoped workflows. Enterprise teams typically start with one high-risk workflow and expand governance coverage across functions from there.

Working through HITL design for a high-risk workflow, or want to reduce AI agent sprawl through better routing architecture? Contact us to talk through your agentic AI roadmap.

FAQs About Human-in-the-Loop Workflows

These are the questions IT and operations leaders most often raise when evaluating HITL workflow design and governance requirements.

What Is the Difference Between Human-in-the-Loop and Human-on-the-Loop?

HITL requires a human to approve or reject each AI output at critical decision points before the workflow proceeds. HOTL lets AI operate autonomously while a human monitors aggregate behavior and retains the ability to override. Most enterprise organizations deploy both simultaneously, matching the oversight model to each workflow's risk profile.

When Can AI Run Autonomously Without Human Review?

AI can run autonomously when the task is low-consequence and reversible, the model's confidence score exceeds a validated threshold, and a preapproved playbook is in place. High-stakes decisions involving sensitive data, material financial impact, or regulatory classification should route to human review. The threshold is a business decision calibrated to the cost of failure.

Does the EU AI Act Require Human-in-the-Loop Specifically?

EU AI Act Article 14 requires high-risk AI systems to support effective oversight by natural persons, but doesn't prescribe HITL by name. Whether HOTL architectures satisfy the "effective oversight" standard in specific risk categories remains an active area of legal interpretation. A conservative compliance position for high-risk AI categories is HITL until regulators clarify.

How Do You Prevent Human-in-the-Loop from Becoming a Rubber Stamp?

Automation bias can cause reviewers to approve AI outputs without genuine evaluation, which defeats the purpose of human oversight entirely. Calibrate escalation volume so reviewers can genuinely evaluate what they receive. Provide sufficient context for informed judgment rather than a binary approve/reject prompt with no supporting detail. Ensure reviewers have domain expertise appropriate to the decision type.

What Does Human-in-the-Loop Cost, and What Is the ROI?

Direct costs include reviewer time, tooling, and processing latency. The ROI case is both defensive and offensive. Security AI and automation, including governance infrastructure such as HITL, can reduce average breach costs by about $1.9 million and help resolve breaches more quickly, according to IBM. Organizations with strong governance practices demonstrate the value of AI to boards and executives more credibly.

